A Deal with Conscience at a Hackathon, or 'How to Win with Non-Working Code?' One Team's Story

A hackathon team exposes how a competitor won first place at a government-sponsored hackathon with a completely non-functional prototype — just a pretty frontend facade with no working backend, ML, or data processing.

Disclaimer

Everything described here is the subjective opinion of the author, based on personal experience. All links to code and websites are publicly available.

Introduction

Every one of us has thought about participating in a hackathon at some point. The romance of it — code, energy drinks, and the idea of changing the world for the better. But not everyone knows what goes on behind the scenes at such events, especially when the clients are government organizations.

This story began last autumn, when five friends decided to enter the battlefield for the sake of an idea: improving people's lives. We assembled a team, analyzed the available tracks, and settled on the hackathon "Leaders of Digital Transformation."

Our team had participated in this competition before and, in a fairly honest fight, took second place. Over the past year, we'd done our homework: improved our internal team processes, brushed up on the fundamentals, and were ready for a rematch.

The Task and Preparation

We chose the track from the Moscow Department of Housing and Communal Services (DZhKKh).

The task: Create a recommendation service for predicting the occurrence of technological incidents.

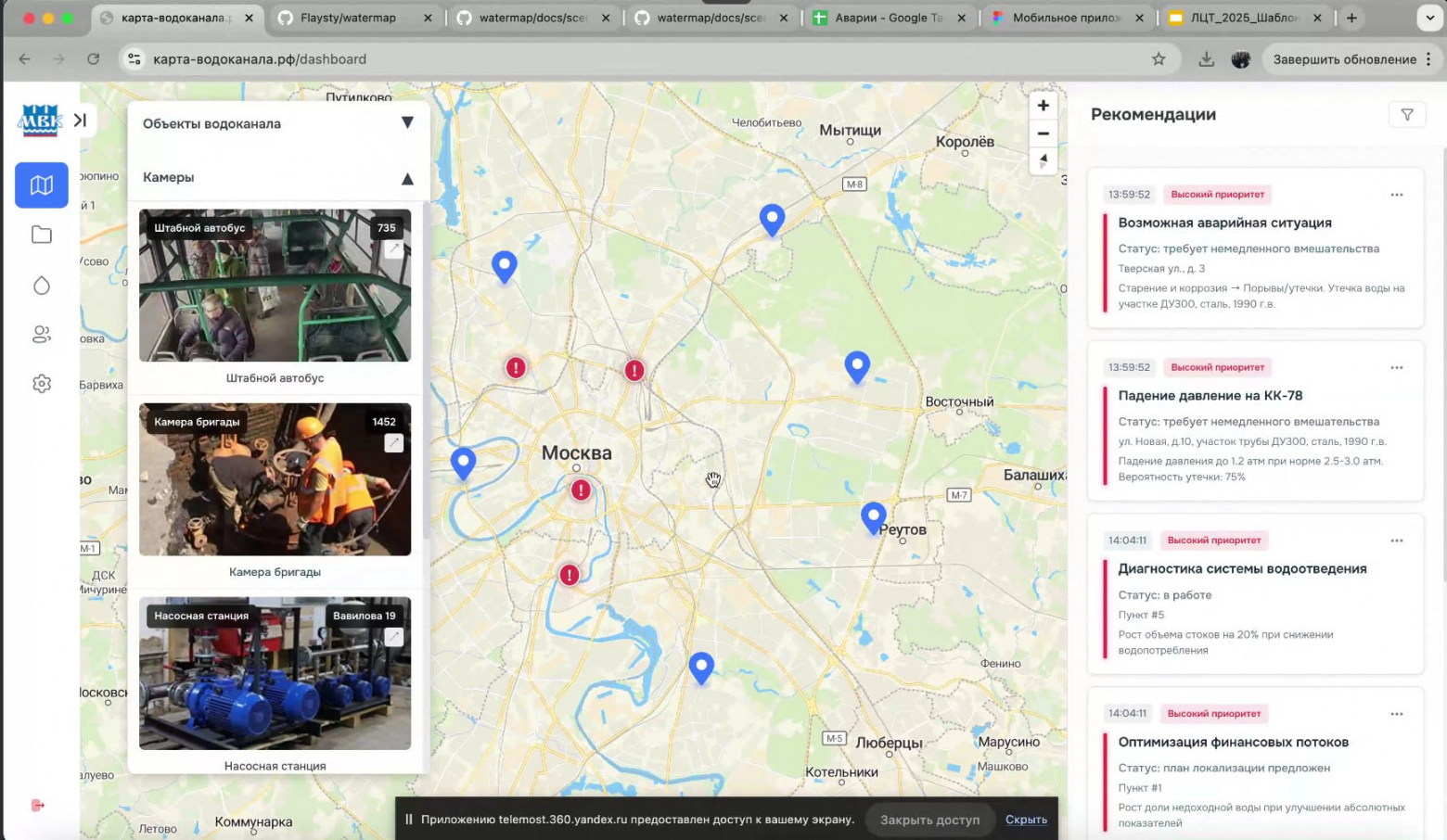

In short: We needed a map with dashboards where a dispatcher could quickly identify failures in water supply networks.

A great business problem with perfectly clear goals. Without much hesitation, we got to work. Last time we'd had shortcomings due to the lack of a strong frontend developer. This time we found a valuable team member who could cover that area, and we sat down to work.

Two weeks of fierce battles with code, Docker containers, data, and a heap of technical difficulties.

A hackathon's purpose, as participants see it:

- Improve processes and people's lives.

- Save the organization money on developing a prototype.

- Receive compensation for the work done (if the solution genuinely helps the company).

Drawing on experience, we began distributing tasks, designing data processing pipelines, building schemas, and thinking through the service logic. Two weeks without sleep, different prototypes, frontend variants, integrations, DevOps deep dives, and learning the specifics of Moscow's water utility operations.

Finally, the solution was assembled, launched, and debugged. We tested it across various scenarios: modeled emergency situations, built a water supply map of districts from heating network points, found building addresses, configured animations and dashboards. We cross-referenced against the requirements — and confirmed we'd completed the majority of them.

The last night before the deadline is always sleepless. Everyone's nervous, things break at the last moment, and you fix them on the fly. And then the solutions are submitted for review.

The Pitch Session and First Warning Signs

A few days pass. Teams present their solutions at an open pitch session. Time is regulated, everything is serious. We watch other teams' presentations, see interesting approaches, different levels of preparation. But one solution stands out... in a strange way.

The entire presentation by team "Karta-Vodokanala" (Water Utility Map) felt like watching a kindergarten teacher perform, as if the word "cringe" had been invented for exactly this moment.

The team's presenter opened with the words: "We have friends at DZhKKh."

Everyone tensed up. We didn't listen to any other team as attentively as this one.

First: when a situation like this arises, you wonder what the point of the competition is if the team has "connections" at the client organization.

Second: the presenter mentioned that they had already served as a contractor nine times for the "Karta-Ofisa" (Office Map) service and various water utility services.

That raises a question, but let's save it for later.

We continued listening to the presentation in the format of: "Dear children, look at these beeeautiful buttons, look how amaaaazing we are."

Visually, the service did look like a strong competitor, outpacing other solutions by two steps in many respects. But the essence of a hackathon lies in solving a real problem, not in creating a pretty picture, right? Right?

Then during the presentation we see screenshots of a page with a Moscow map and points marked as addresses with problems. And we immediately freeze: these are completely random points that were NOT in the dataset AT ALL. We thought, well, maybe we're seeing things.

Then on the same screenshot we notice an IPTV video surveillance block. Interesting solution... But how does it work? Why do the images from these cameras show water supply pumps sitting on transport pallets in a warehouse? Could this have been obtained as part of simple hackathon participation? Very strange.

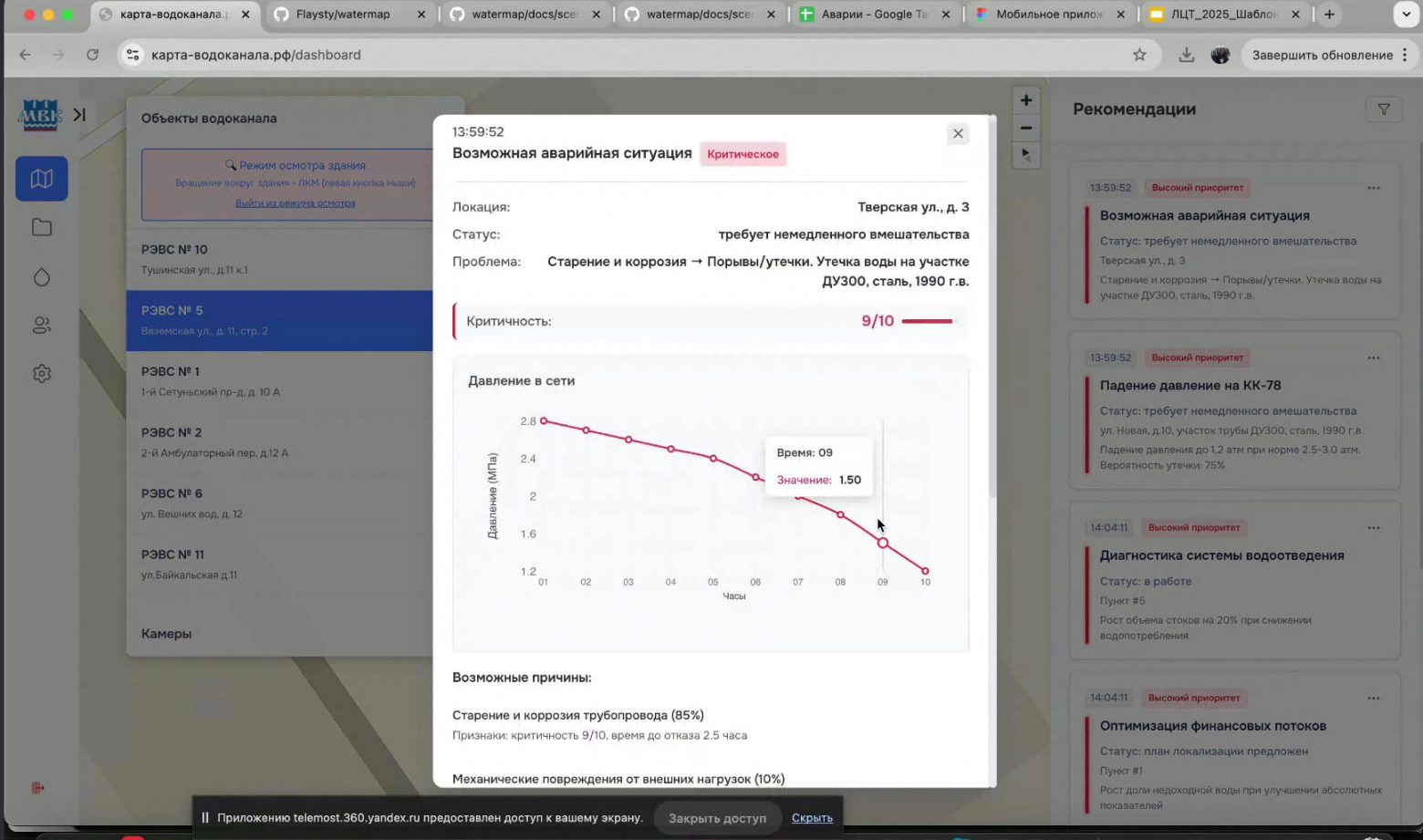

Then we see weird screenshots with a prediction suggesting that a water supply pipe should corrode within two hours... Amusing. We keep watching.

The presenter then declares that their team used an LLM for predicting leaks in water supply systems. WHAT?!

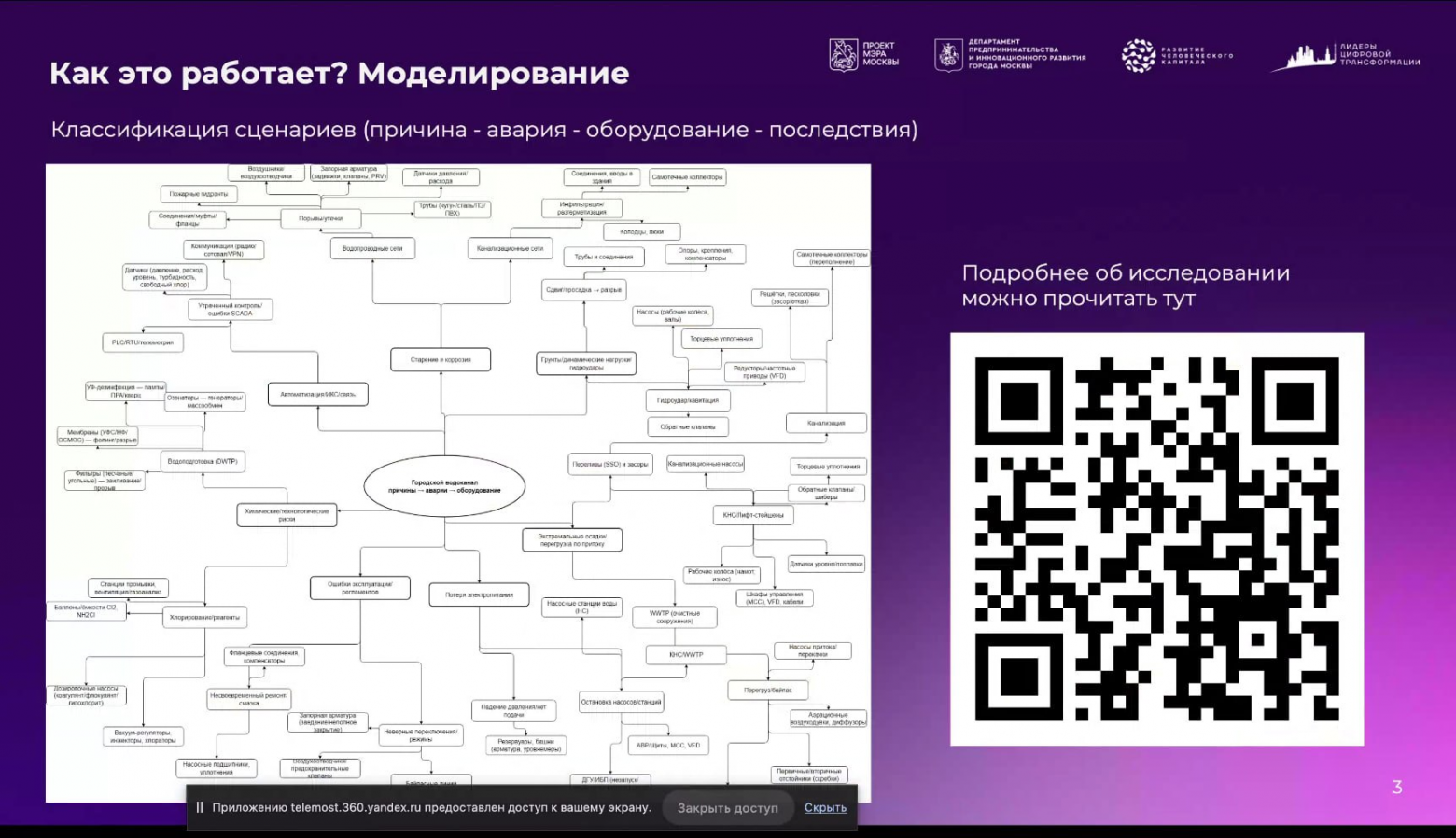

Then we're shown a beautiful and absolutely comprehensible (to any schoolchild) diagram of emergency scenario types. All in an extremely user-friendly and clear format (not).

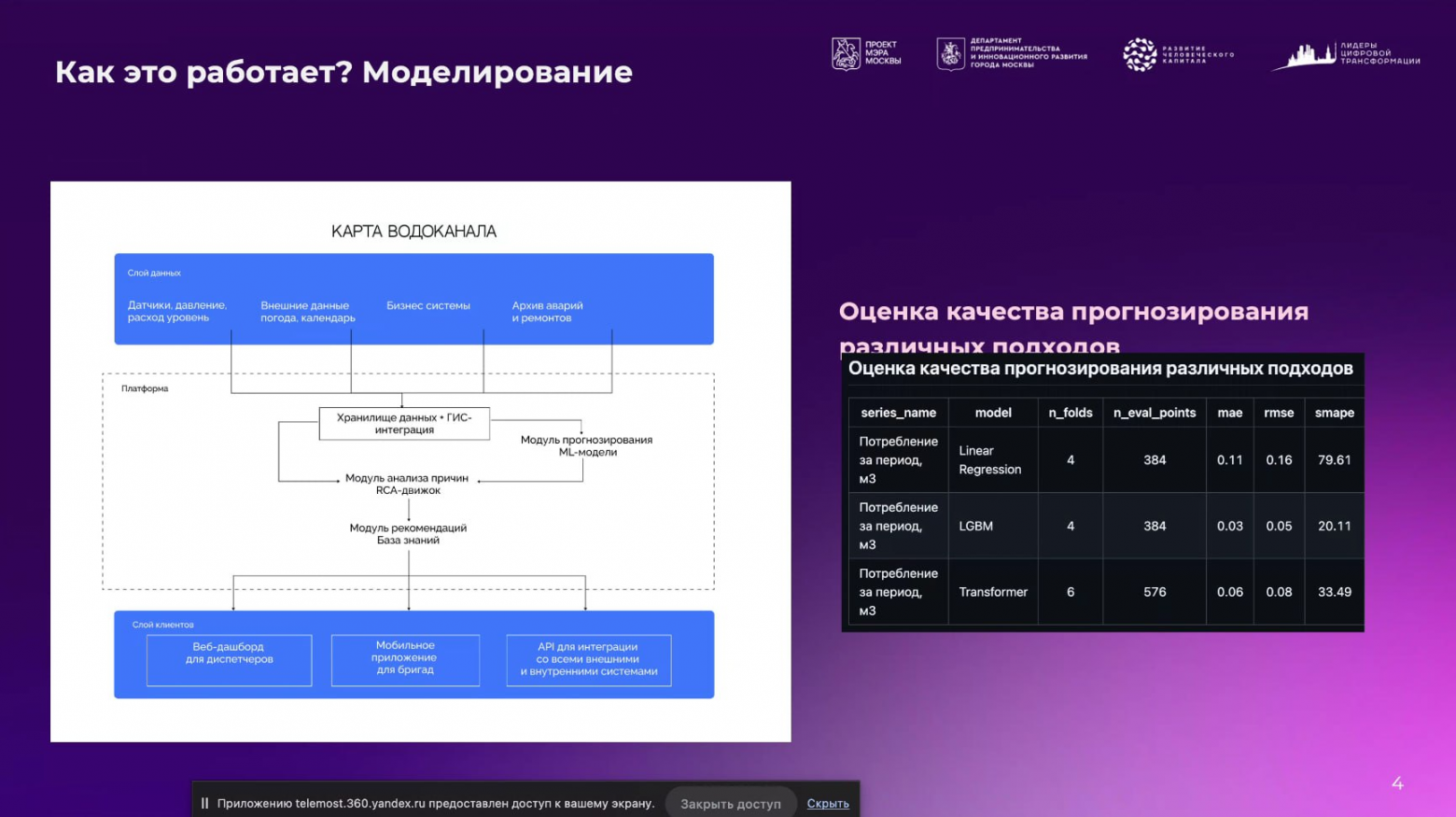

The next slide covers the technical implementation of the service. Let's note the claimed architecture:

- Data storage + GIS integration.

- Prediction module with ML models.

- Root cause analysis module (RCA engine).

- Recommendations module (Knowledge base).

Remember this. We'll need it later.

Technical Post-Mortem: Metrics and Reality

Now for the ML model metrics presented on the slide:

- Linear Regression: 4 folds, 384 points.

- LGBM: 4 folds, 384 points.

- Transformer: 6 folds, 576 points.

What do we see? We observe a suspicious misrepresentation of results (if they're even real).

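1. Model comparison is invalid. For a valid comparison, all models must be evaluated on the same folds. Both eval_points counts work out to 96 points per fold (384 / 4 and 576 / 6), so the Transformer was apparently scored on two extra folds, that is, 192 points the other models were never tested on.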

2. SMAPE (Symmetric Mean Absolute Percentage Error) for linear regression is 79.61%, which means the model failed outright. The Transformer is not much better: 33% is a poor result. Why would you even present this?

For reference: SMAPE typically ranges from 0 to 200%, where 0% is ideal, and values above 20% are considered low-accuracy.

3. Contradictions in metrics. MAE/RMSE are very low, while SMAPE is far too high. This points to one of the following issues:

- Data wasn't scaled for SMAPE.

- MAE/RMSE were computed on normalized data.

- OR the data contains near-zero values, causing SMAPE to "explode."
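The third point is easy to reproduce with a few lines of arithmetic. The sketch below is ours, not the other team's code: the numbers are made up, and it assumes one common SMAPE definition (the 0 to 200% form). It shows how the same absolute errors give a tiny MAE together with a huge SMAPE when values sit near zero, and a negligible SMAPE once the series is shifted to a larger scale.

```javascript
// Mean absolute error: average of |actual - predicted|.
function mae(actual, predicted) {
  const total = actual.reduce((sum, a, i) => sum + Math.abs(a - predicted[i]), 0);
  return total / actual.length;
}

// SMAPE in percent (0..200 form): 100/n * sum(|a - p| / ((|a| + |p|) / 2)).
// Pairs where both values are zero contribute 0 (a convention assumed here).
function smape(actual, predicted) {
  const total = actual.reduce((sum, a, i) => {
    const denom = (Math.abs(a) + Math.abs(predicted[i])) / 2;
    return denom === 0 ? sum : sum + Math.abs(a - predicted[i]) / denom;
  }, 0);
  return (100 * total) / actual.length;
}

// Hypothetical near-zero series (made-up numbers, not the hackathon data).
const nearZeroActual = [0.01, 0.02, 0.0, 0.03];
const nearZeroPredicted = [0.03, 0.01, 0.02, 0.01];

// The same absolute errors on a series shifted to a larger scale.
const largeActual = nearZeroActual.map((v) => v + 100);
const largePredicted = nearZeroPredicted.map((v) => v + 100);

console.log("near zero:", mae(nearZeroActual, nearZeroPredicted), smape(nearZeroActual, nearZeroPredicted));
console.log("shifted:  ", mae(largeActual, largePredicted), smape(largeActual, largePredicted));
```

In both runs MAE stays below 0.02, while SMAPE goes from over 100% on the near-zero series to a fraction of a percent on the shifted one. A low MAE next to a high SMAPE therefore says more about the data scale than about model quality.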

The task calls for building a time series and forecasting it. WHY use linear regression here? That remains an open question.

Based on what we learned about their models, several questions arose:

- Why different numbers of folds? This is a red flag for development quality.

- Why did the Transformer perform 1.6x worse than LGBM on SMAPE? Was it even properly tuned?

- What's the data scale? If consumption is in m³ (typically 10–1000), then MAE=0.03 is suspiciously small.

- Why is there no Transformer in the final solution? Probably because the team realized it was ineffective.
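On the first question: a comparison is only fair when every model is scored on identical splits. Here is a minimal sketch of that idea. The forecasters (`lastValue`, `trainMean`) are hypothetical stand-ins, the series is made up, and the expanding-window fold layout is our assumption, since the slide does not say how the folds were built.

```javascript
// Build expanding-window folds once: train on [0, trainEnd), test on [trainEnd, testEnd).
function timeSeriesFolds(length, nFolds, testSize) {
  const folds = [];
  for (let k = 0; k < nFolds; k++) {
    const testEnd = length - (nFolds - 1 - k) * testSize;
    const trainEnd = testEnd - testSize;
    if (trainEnd <= 0) throw new Error("series too short for this fold layout");
    folds.push({ trainEnd, testEnd });
  }
  return folds;
}

// Hypothetical stand-in models: each takes the training data and a horizon,
// and returns `horizon` predictions.
const demoModels = {
  lastValue: (train, horizon) => Array(horizon).fill(train[train.length - 1]),
  trainMean: (train, horizon) => {
    const mean = train.reduce((s, v) => s + v, 0) / train.length;
    return Array(horizon).fill(mean);
  },
};

// Score every model on the SAME folds, so MAE values and point counts are comparable.
function evaluateOnSharedFolds(series, models, folds) {
  const report = {};
  for (const [name, fitPredict] of Object.entries(models)) {
    let absErrorSum = 0;
    let evalPoints = 0;
    for (const { trainEnd, testEnd } of folds) {
      const train = series.slice(0, trainEnd);
      const test = series.slice(trainEnd, testEnd);
      const predicted = fitPredict(train, test.length);
      test.forEach((actual, i) => {
        absErrorSum += Math.abs(actual - predicted[i]);
      });
      evalPoints += test.length;
    }
    report[name] = { mae: absErrorSum / evalPoints, evalPoints };
  }
  return report;
}

// Made-up series for illustration only.
const demoSeries = [12, 14, 13, 15, 16, 18, 17, 19, 21, 20, 22, 24];
const sharedFolds = timeSeriesFolds(demoSeries.length, 4, 2);
const report = evaluateOnSharedFolds(demoSeries, demoModels, sharedFolds);
console.log(report);
```

Because the folds are built once and passed to every model, the `evalPoints` column is identical across rows by construction. A table where it differs, as on the slide, means the models were not scored on the same data.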

We saved these questions for the post-awards meeting. But we had no idea how that would go.

At the end of the presentation, the jury commented: "You have the best scenario-based approach, among the best of all."

We won't belabor the point that such comments shouldn't be made during pitch sessions. But I'm more than confident that this statement crushed any hopes other teams had of getting their solutions approved for production use or even a top placement.

Investigation: What's Under the Hood?

After the pitch session, we gathered as a team for discussion. First thing, we examined the "karta-ofisa.rf" solution.

The main page of this service displays a decent collection of awards from various hackathons and events:

- "Interactive Company Structure Map" module for the "Office Map" system.

- Professional development certificate for their commercial director.

- Winner diploma from AI Product Hackathon on the NLMK track.

- Winner diploma for "Administrative Director 2023" award.

- "Office Map" — winner of the Skolkovo accelerator.

By now the list has grown to include a diploma from this hackathon:

Team "Karta Ofisa" has been awarded the Mayor of Moscow Prize for a digital solution as part of the "Leaders of Digital Transformation" award.

Since we saw a pretty picture at the presentation, we immediately wanted to test the team's solution. It remains publicly available at: watermap.ofmaps.ru.

Testing results raised a LOT of questions about the product:

1. Half the functionality doesn't work. Buttons are present, but they literally do nothing. No REST requests to the backend, no frontend actions either.

2. The video camera block is nothing more than embedded static photographs. There is no actual functionality behind it.

3. The recommendations block is effectively non-functional. All data is static and hardcoded on the frontend. There is no real interaction with the backend.

4. Accident prevention action plan. After clicking "Approve Plan," we see a uniform animation simulating critical server operations: "AES algorithm in use," "Generating signature," "GOST certificate compliance," etc. After which we can sign the document. Again we see a beautiful animation with nothing behind it. No backend communication whatsoever.

5. The monitoring page doesn't function at all.

6. The "brigades" page doesn't function — it's a hardcoded frontend. Buttons for editing data, adding employees, and deleting brigades don't work.

7. The settings page (apparently for simulating emergency situations) has numerous parameters and sliders... But changing them leads to nothing. Clicking "Save Changes" produces a popup saying settings are saved, but nothing is sent to the backend.

In its current implementation, this is an imitation of functionality that runs solely on the frontend. Here's the actual code we found:

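    // Minified bundle as found; `e` is presumably the settings object from the enclosing scope.
    const p = () => {
      // "Saving settings:"
      console.log("Сохранение настроек:", e),
      // "Settings saved!"
      alert("Настройки сохранены!")
    }

An interesting solution, and most importantly, how reliably it works.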
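For contrast, a save handler that really persisted anything would have to send the settings somewhere. Here is a minimal sketch of what that could look like. The `/api/settings` route, the `PUT` method, and the function names are our assumptions for illustration, not something taken from the watermap repository.

```javascript
// Hypothetical endpoint, chosen for illustration only.
const SETTINGS_URL = "/api/settings";

// Describe the HTTP request that would carry the settings to the backend.
function buildSaveRequest(settings) {
  return {
    url: SETTINGS_URL,
    options: {
      method: "PUT",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify(settings),
    },
  };
}

// Send the settings and report success only after the server confirms it.
// `fetchImpl` is injectable so the function can be exercised without a network.
async function saveSettings(settings, fetchImpl = globalThis.fetch) {
  const { url, options } = buildSaveRequest(settings);
  const response = await fetchImpl(url, options);
  if (!response.ok) {
    throw new Error(`Saving settings failed with HTTP ${response.status}`);
  }
  return response.json();
}
```

With a handler like this, the "settings saved" popup would appear only after `saveSettings` resolves, and a failed request would surface as an error instead of a cheerful alert.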

We couldn't believe our eyes and went searching for this team's GitHub. And we found it, thanks to Yandex search!

- Original GitHub: https://github.com/Flaysty/watermap

- Our fork (to preserve a checkpoint): https://github.com/xEnotWhyNotx/watermap

Anyone can verify my words and check the functionality of this service themselves.

After studying the repository from top to bottom, it turned out that the frontend barely interacts with the backend at all. The ML module is commented out and also has no connection to the service. The solution is nothing more than pretty buttons and text filler on pages.

If the solution is being evaluated by technical specialists who will implement the product in their infrastructure, they are obligated to notice that the solution is invalid and will not work in any way. Not from the perspective of interaction logic, not from the ML side, not from the standpoint of common sense. It's a placeholder, not a service.

Let's go through the checklist of their architecture as stated on the slide:

- Data storage + GIS integration — absent.

- Prediction module with ML models — exists, but practically all code is commented out and not implemented.

- Root cause analysis module (RCA engine) — absent.

- Recommendations module (Knowledge base) — absent.

Attempt to Seek Justice

Alright, we conferred as a team, filed a request with the jury to verify the solution's functionality and compliance with the requirements, and decided to wait for the awards ceremony.

Our request read:

"Hello! We are team GigaWin. We'd like to ask whether solutions are being evaluated from a technical standpoint and whether there will be an additional defense round. During the pitch session, we listened to team 'Karta Vodokanala' and noticed that their solution is non-functional, barely works with data, and is essentially an interface template. We examined their repository and found that the service doesn't process data but uses a pre-made template. We were concerned by the positive evaluation from jury members. Since the goal of the competition is to develop a functional prototype, we'd like to clarify whether there will be an additional pitch session or another opportunity for participants to demonstrate the degree of their solution's development."

The response we received:

"Solutions are evaluated by jury members, which include both technical specialists and the business client. Each solution is examined from all angles, taking into account a degree of uncertainty that arose with the datasets. We will separately verify the teams' solutions, but in any case, the vote of any one part of the jury is not decisive — that's the point: everyone evaluates from their own perspective, and scores are combined. In any case, we'll look into this, thanks for the info!"

All that's left is to wait... We received no further clarifications or answers.

Meeting with the Client and the Finale

An hour before the awards ceremony, teams can meet with the case holders. We gladly attended to ask questions about our solution and chat with other participants. Only one representative from DZhKKh showed up, despite many being announced. We talked, and the representative turned out to be a great person: we found common ground and clarified some points.

But throughout the entire meeting, the "Karta-Vodokanala" team kept disrupting things. Their captain constantly tried to extract contact information from the DZhKKh specialist and "sold" their solution, pushing for contacts to secure further contract work. In normal business life this is perfectly fine, but during a Q&A session it is inappropriate, especially with that level of pushiness.

We then started asking the team captain pointed technical questions, since he claimed to be the chief technical specialist who knew everything inside and out. After the very first clarifying questions about THEIR OWN service, the "chief technical specialist" crumbled. Not a single question received a proper answer. The responses we did get were in the format of: "you don't understand, you don't know how, but we can."

We didn't let them "sell" the karta-ofisa.rf product, which caused considerable displeasure from the team.

At the awards ceremony, first place went to... I think everyone can guess which team. Karta-Vodokanala.

After the ceremony, I couldn't contain myself and went to ask questions of their chief technical person. After my very first question, I was told — in crude terms — to go away along with my team, with a recommendation not to bother them. Having easily parried this, I continued with further questions, which the team refused to answer — confirming the lack of competence on the subject. The team captain started flaunting his Kaggle achievements. But for some reason, this "grandmaster" (by his own claim) couldn't answer basic questions about their ML implementation or standard ML questions in general, which once again cast doubt on this person's competence.

Conclusions and Boycott

After the hackathon ended, our team decided that participating in tracks from government organizations risks having first place "sold" to the case holder's contractors. Talking with other teams only strengthened that conviction. Ours was far from the only team to face this injustice.

Honestly, when the case holder's own contractors enter the competition, the entire point of the competition is lost. The desire to create something that actually works and improves people's lives disappears. A heavy feeling sets in that everything around you is "bought" and there's nothing to do here. It's all "insiders" everywhere, money laundered on non-existent solutions with a pretty cover and presentation. Everything around is a facade.

Our team has decided to boycott such events and participate exclusively in honest competitions. Our final placing may not be at the top, but it will be honest and earned in a genuine battle with smart people, not with sellers of conscience.

If you want to make money, friends, it's better to just get a job. And if you want to compete, prove your excellence, and improve the world — try your hand at competitions not connected to the government sector.

This turned out to be one of my first posts. But I can't stay silent about this. The whole situation is very unpleasant. And it's unpleasant not because of the money, but because of the injustice and dishonesty. Our team took third place. And I feel sorry for the DS Crafters team, who took second place despite having every chance to fight with us for first and second. After all, it's far better to compete against working services that actually solve real problems rather than against stubs designed for a pretty picture.

Important: This post does not use any names or contact information of the "Karta-Vodokanala" team members. They publish their own contacts on their official website under the "About" section at karta-ofisa.rf/about, and they are not shy about selling their solutions under the brand name OOO "Sfera Bitubi."

Unfortunately, having seen this team's approach to work and sales, I have formed a clear opinion. If I ever come to own a major product or run a business, I would never engage this organization.

Frankly, I'm amazed that the team wasn't disqualified for such violations during the hackathon, as this is a direct conflict of interest. There is no more obvious example.

Good luck to everyone in competitions, may you achieve worthy results and have the courage to speak the truth as it really is!