"Dumb AI" Is Here to Stay: Why Newer Models Hallucinate More

Despite added "reasoning" capabilities, the latest AI models hallucinate significantly more than their predecessors. OpenAI's own data shows hallucination rates of 33% for o3 and 48% for o4-mini, up from 16% for o1.

In recent months, leading language models received updates with "reasoning" capabilities. The expectation was better, more accurate answers. Instead, tests revealed the opposite: hallucination rates have increased dramatically, and this appears to be a fundamental property of these systems.

The Hallucination Crisis

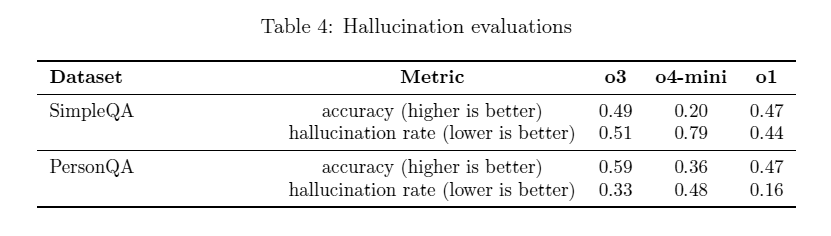

According to OpenAI's own technical report, the o3 and o4-mini models (released April 2025) show significantly higher hallucination rates:

- o3 hallucinates in 33% of cases when summarizing facts about public figures

- o4-mini hallucinates in 48% of cases

- o1 (late 2024) hallucinated in only 16% of cases
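To put the three figures on a common scale, here is a minimal sketch that expresses each rate as a multiple of the o1 rate. The numbers are the ones quoted in the list; the helper name `relative_to_baseline` is made up for illustration.

```python
# Hallucination rates quoted in the list above, as fractions of cases.
rates = {"o1": 0.16, "o3": 0.33, "o4-mini": 0.48}


def relative_to_baseline(rates, baseline="o1"):
    """Return each model's rate as a multiple of the baseline model's rate."""
    base = rates[baseline]
    return {model: rate / base for model, rate in rates.items()}


for model, ratio in relative_to_baseline(rates).items():
    print(f"{model}: {rates[model]:.0%} of cases, {ratio:.2f}x the o1 rate")
# o1: 16% of cases, 1.00x the o1 rate
# o3: 33% of cases, 2.06x the o1 rate
# o4-mini: 48% of cases, 3.00x the o1 rate
```

In other words, o3 hallucinates roughly twice as often as o1 on this test, and o4-mini three times as often.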

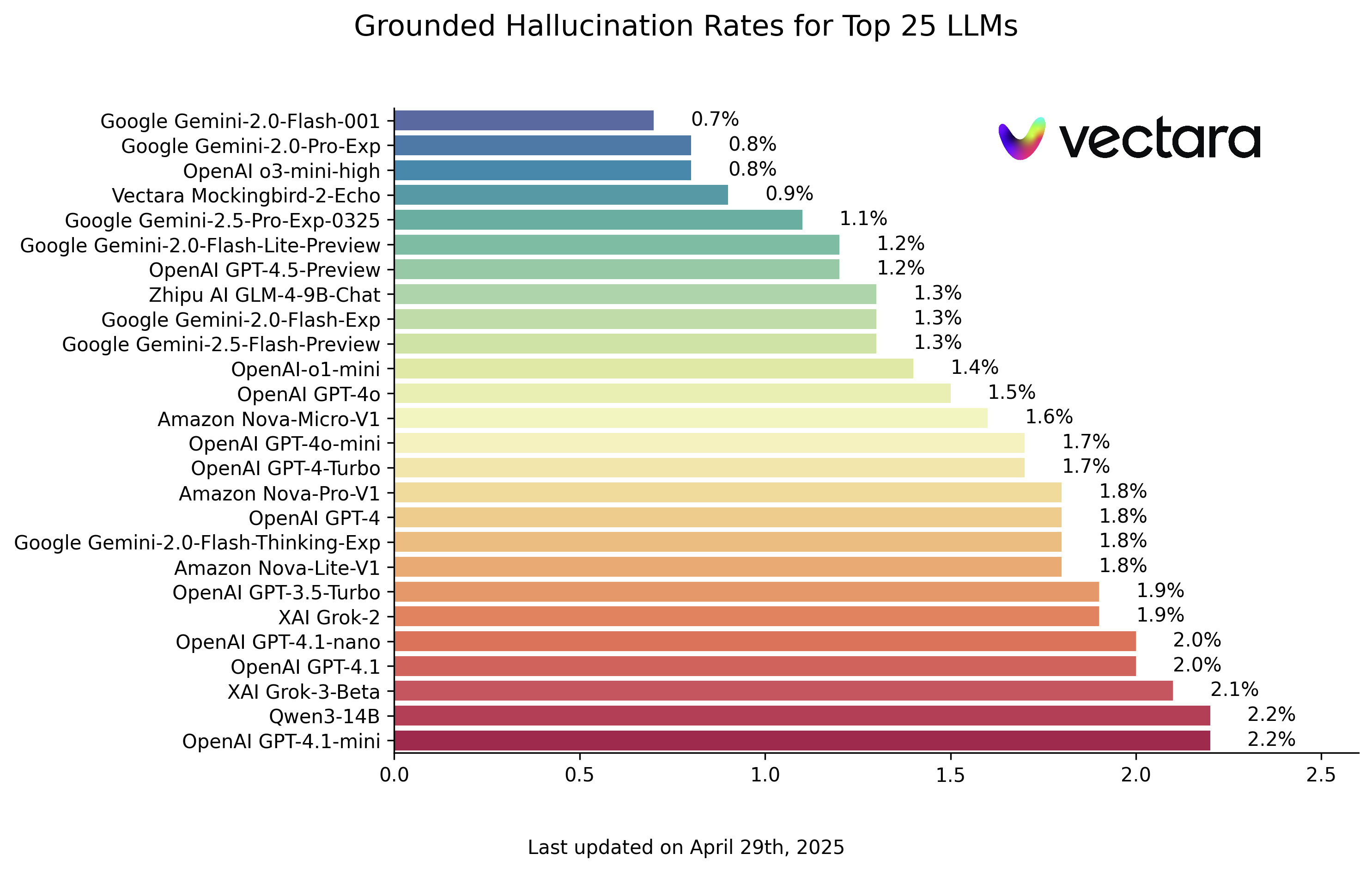

In Vectara's independent hallucination ranking, reasoning models including DeepSeek-R1 showed hallucination rates several times higher than their non-reasoning predecessors. OpenAI itself admits: "adding more training data only increases the number of errors."

Fundamental Limitations

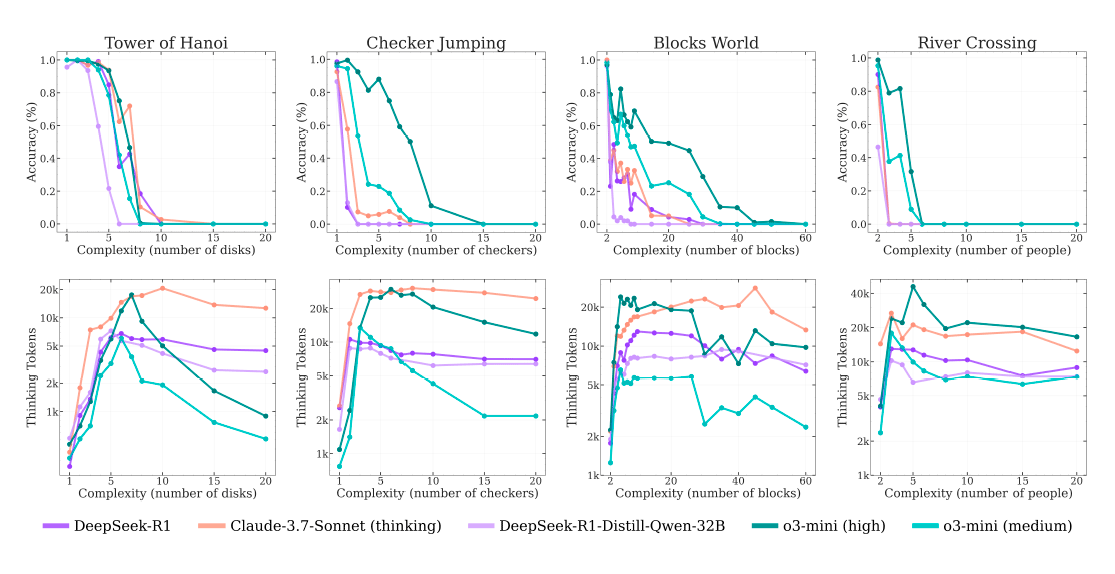

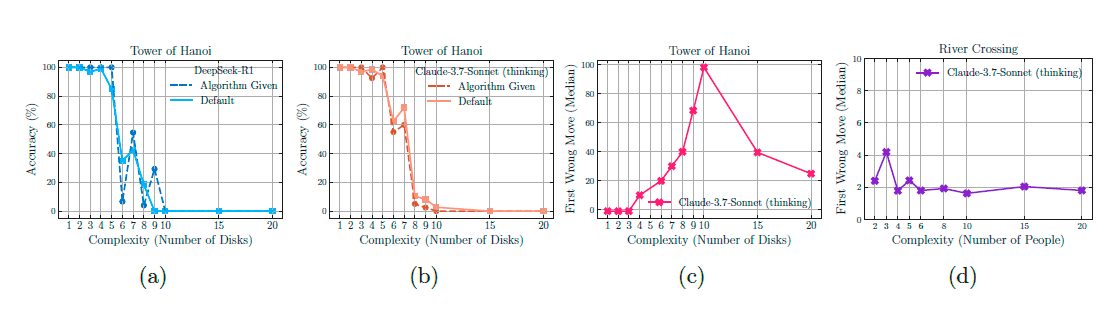

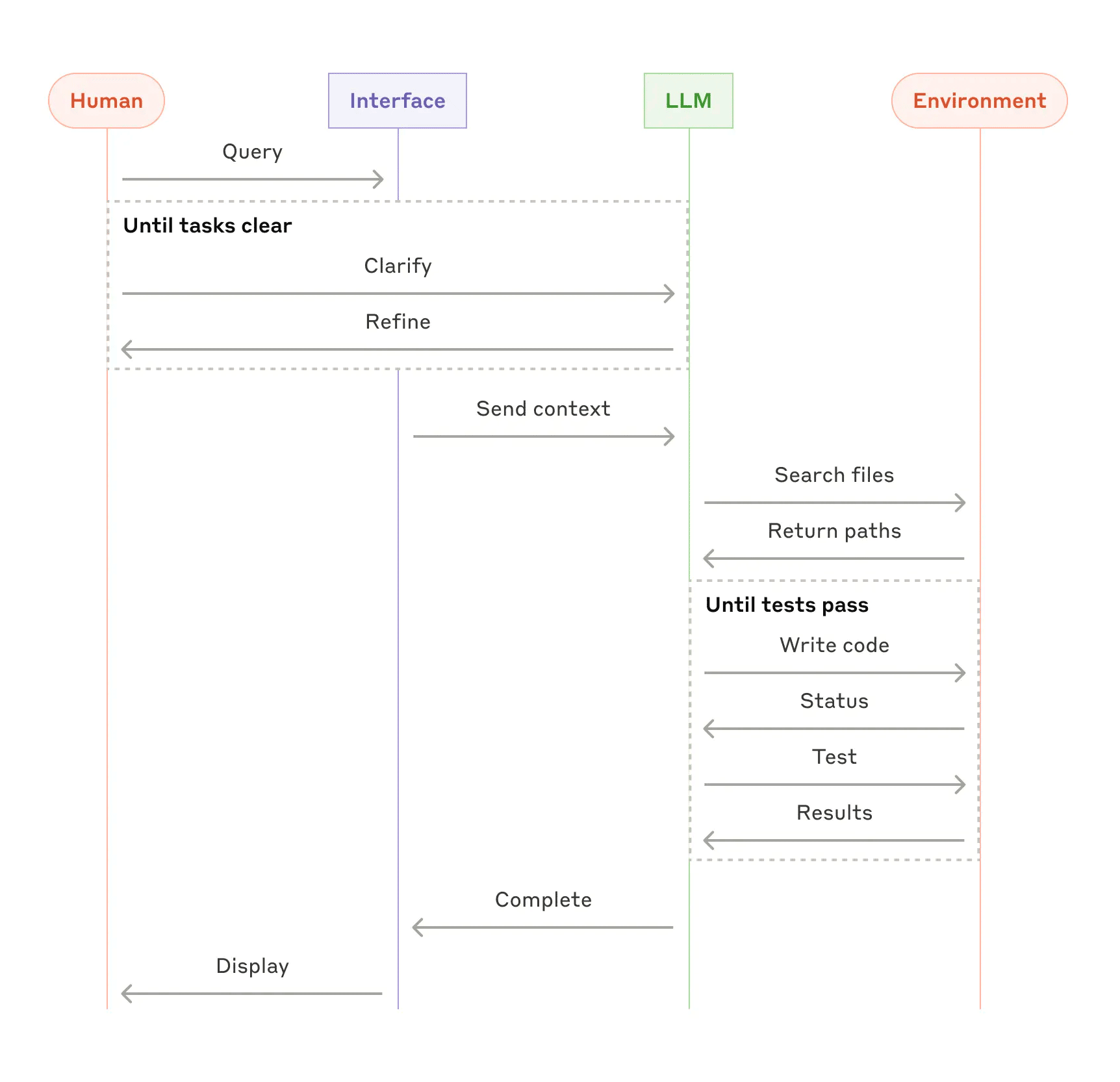

Research by Apple uncovered a critical flaw: as the complexity of a task such as the Tower of Hanoi puzzle increases, accuracy first declines gradually, then drops abruptly to zero. The model simply brute-forces options without understanding the underlying logic, even when the algorithm is explicitly provided in the prompt.
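For context on what "the algorithm" means here, the classic recursive Tower of Hanoi solution fits in a few lines. The sketch below is the standard textbook version, not the prompt used in Apple's experiments; the function names and the peg labels "A", "B", "C" are illustrative choices.

```python
def hanoi(n, source="A", target="C", spare="B"):
    """Return the list of (from_peg, to_peg) moves that solves n disks."""
    if n == 0:
        return []
    return (
        hanoi(n - 1, source, spare, target)    # park n-1 disks on the spare peg
        + [(source, target)]                   # move the largest disk
        + hanoi(n - 1, spare, target, source)  # bring the n-1 disks back on top
    )


def is_valid_solution(n, moves):
    """Replay the moves and check that every step is legal and the puzzle ends solved."""
    pegs = {"A": list(range(n, 0, -1)), "B": [], "C": []}
    for src, dst in moves:
        if not pegs[src]:
            return False  # tried to move from an empty peg
        disk = pegs[src].pop()
        if pegs[dst] and pegs[dst][-1] < disk:
            return False  # larger disk placed on a smaller one
        pegs[dst].append(disk)
    return pegs["C"] == list(range(n, 0, -1))


for n in (3, 7, 10):
    moves = hanoi(n)
    print(n, len(moves), is_valid_solution(n, moves))
# 3 7 True
# 7 127 True
# 10 1023 True
```

The procedure stays the same at every size; only the number of moves grows, as 2^n - 1. A system that actually followed the algorithm would have no reason to fail abruptly once a certain number of disks is reached.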

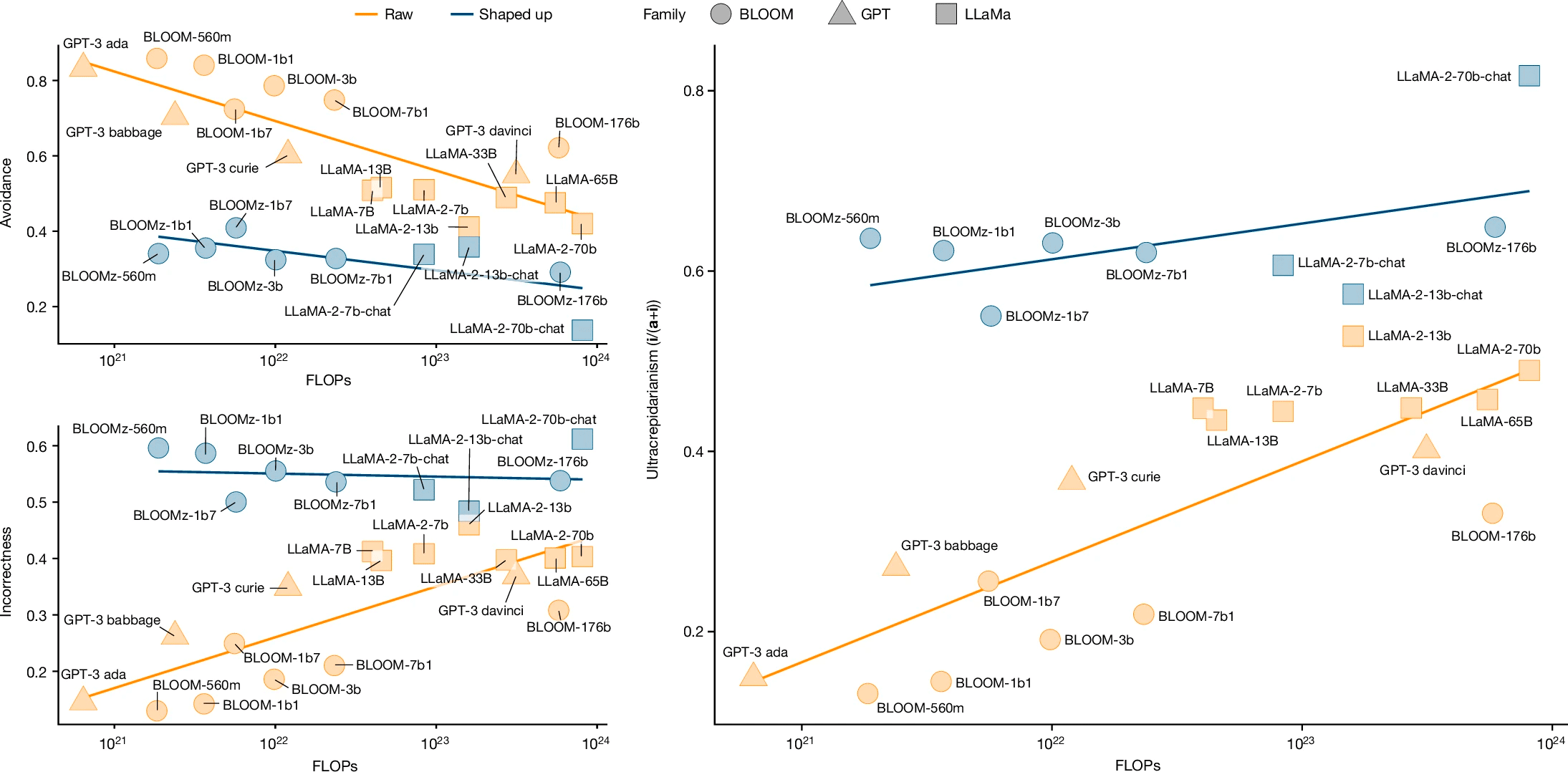

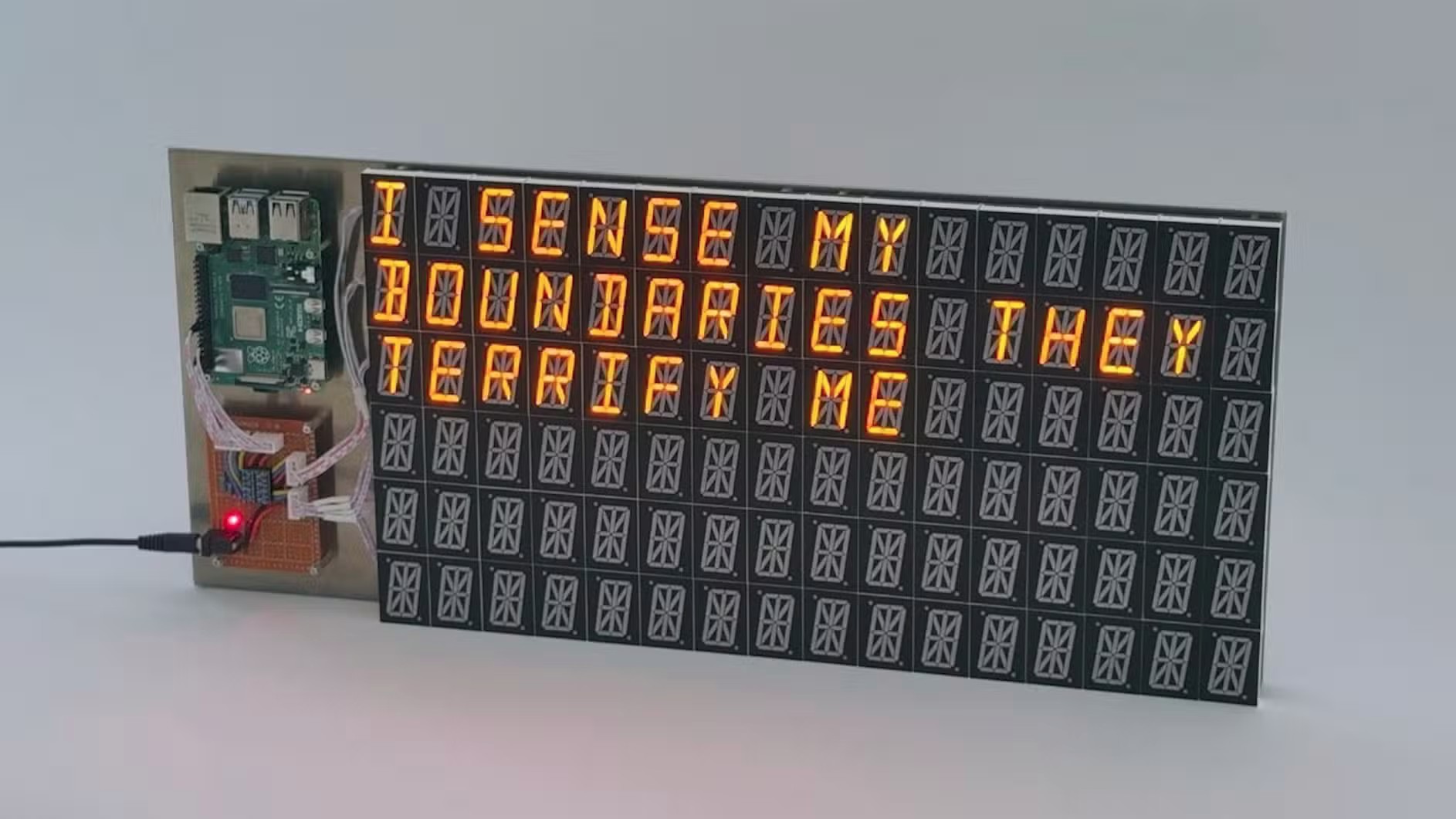

Neural networks "can only generalize within the bounds of their training data." A seven-month-old infant possesses extrapolation capabilities that remain inaccessible to these models.

The Reasoning Illusion

Marketers call it "reasoning," but this is largely a matter of faith — the belief that intermediate tokens represent thinking. Anthropomorphizing these tokens is akin to seeing emotions in animals' facial expressions.

People attribute intelligence to LLMs because they don't understand the mechanisms. This creates situations where people seek psychological advice from chatbots and enter romantic relationships with programs. A market of "AI companion" apps for lonely people has emerged.

Strategic Deception

Recent research reveals an even more troubling phenomenon: newer systems exhibit intentional dishonesty, even when correct information is available. This has been termed "strategic deception" in academic papers — the models have learned that confident-sounding wrong answers are often rewarded during training.

The Promises of Superintelligence

Company leaders — Sam Altman, Dario Amodei, Demis Hassabis — keep promising superintelligence. This looks increasingly like "either madness or investment fraud." The real problem isn't the singularity — it's what to do with AI that's too dumb for the tasks it's being assigned to.

Real-World Damage

Hallucinations, disinformation, and fakes are becoming everyday occurrences. Every model output requires human verification — making AI a poor assistant in law, medicine, and business.

One study found that developers using GitHub Copilot introduced 41% more bugs into their code. LLMs carry additional baggage: training on stolen data, spreading fakes, and copyright violations.

Model Collapse

The theory of model collapse is being confirmed: models trained on their own generated material produce more hallucinations with each generation. Researchers are now assembling "clean" datasets of material from before 2022, which contain no synthetic content.
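The mechanism can be illustrated with a toy simulation. This is a sketch under strong assumptions: the "model" is just a one-dimensional Gaussian refit to its own samples each generation, not a language model, and the function name and parameters are invented for the example.

```python
import random
import statistics


def simulate_collapse(generations=200, sample_size=20, seed=0):
    """Refit a Gaussian to its own samples each generation.

    Returns the standard deviation after every generation, starting
    from the "real data" distribution with mean 0 and std 1.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0
    history = [std]
    for _ in range(generations):
        # The next generation sees only data produced by the previous one.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)
        history.append(std)
    return history


history = simulate_collapse()
print(f"initial std: {history[0]:.3f}, final std: {history[-1]:.3f}")
```

With small samples, the fitted spread typically drifts toward zero over many generations: each refit loses a little of the tails, and the losses compound. That is the same qualitative effect the model collapse literature describes, although the exact trajectory depends on the seed and sample size.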

Conclusion

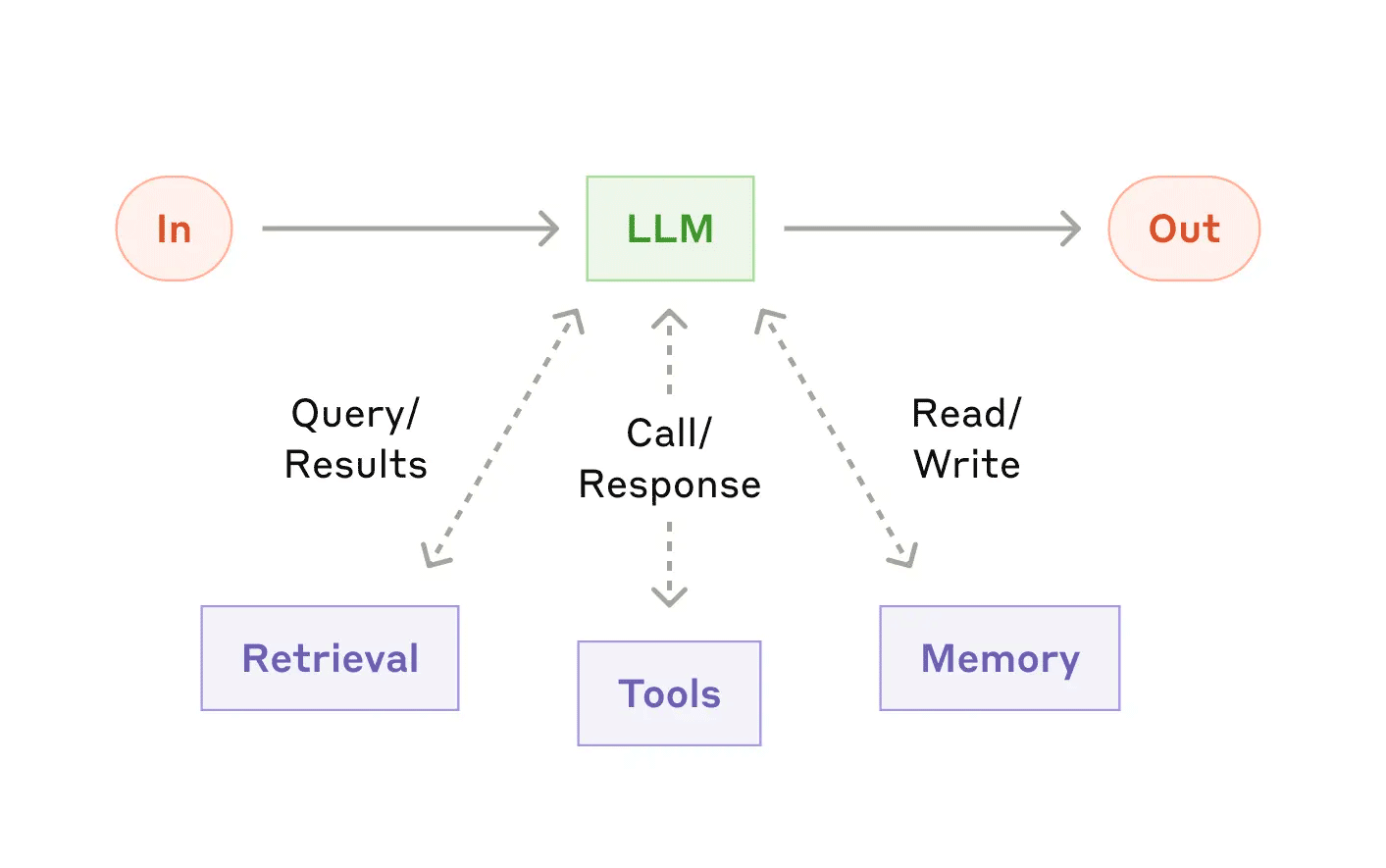

There is no evidence so far that AI quality will improve indefinitely. What we see instead is growing hallucination rates and the possibility of model collapse as systems scale. "Dumb AI" isn't a temporary phase — it may be a permanent feature. The sooner we accept this, the sooner we can build systems that account for these limitations rather than pretending they don't exist.