Fear and Loathing of Vibe Coding: How I Made a Game for My Kid and Hit the Top of Android TV Apps

A data lead at MTS Web Services uses AI assistants to build a maze game for his daughter, navigating hallucinations, race conditions, and API integration nightmares before hitting the Android TV top charts.

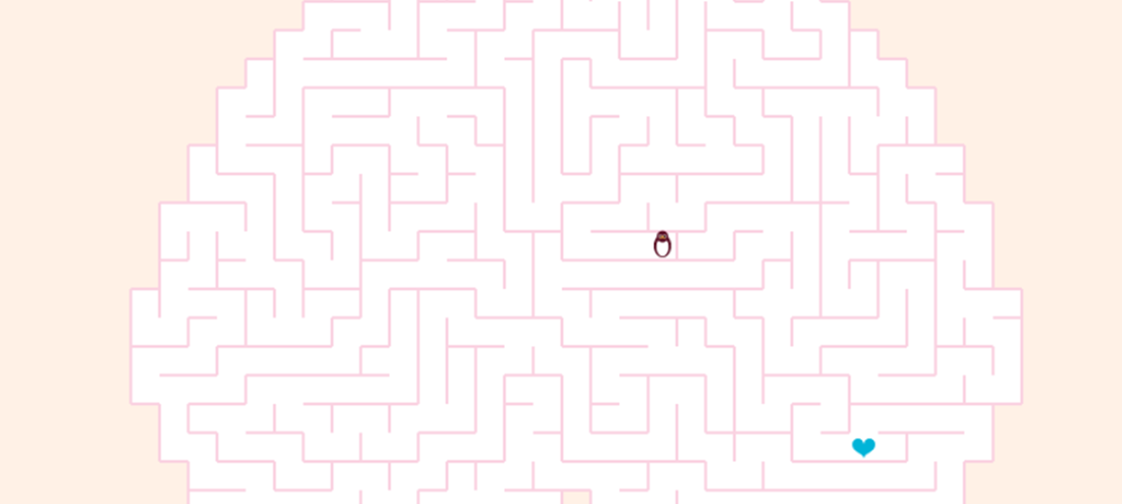

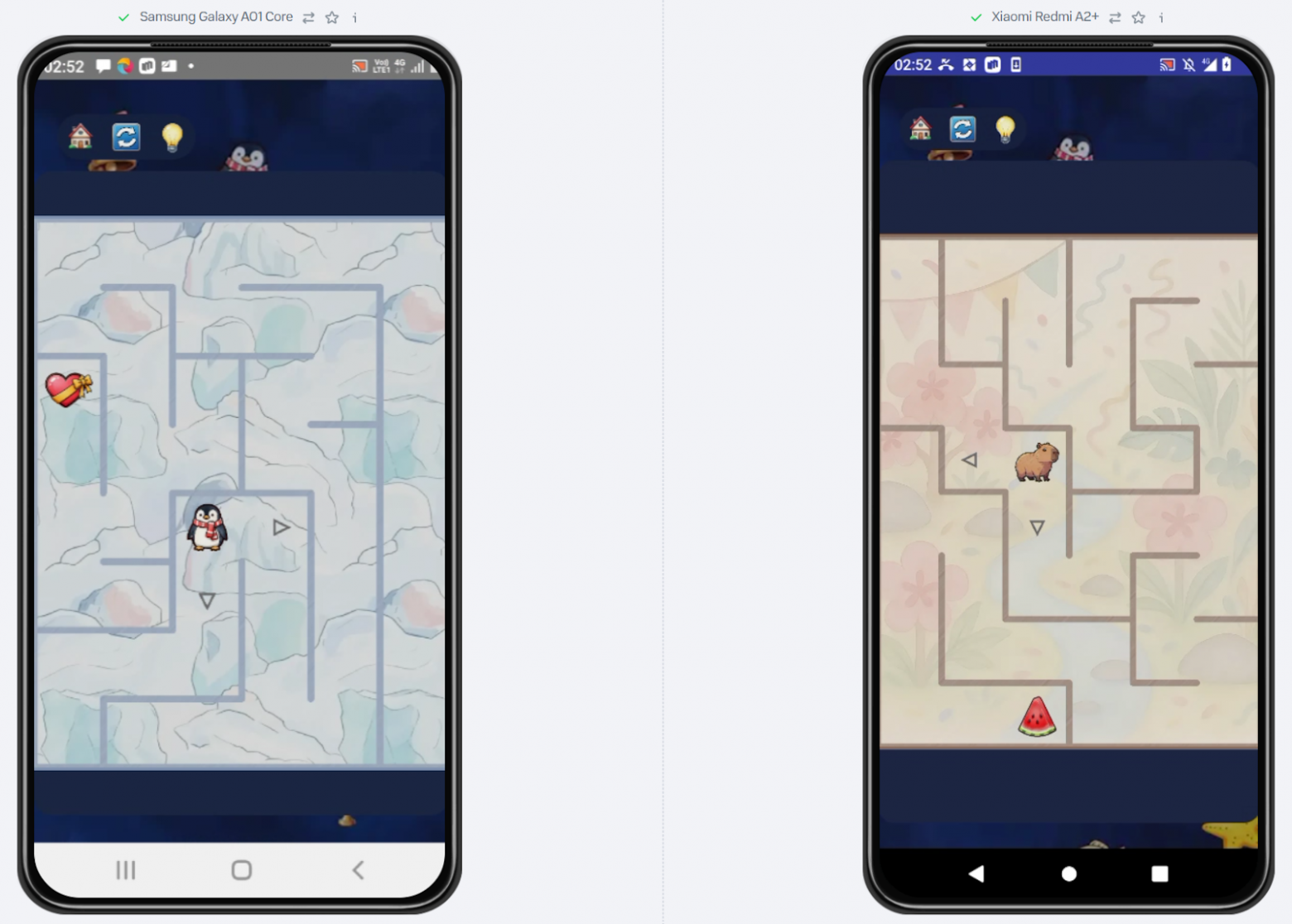

Leonid Kalyadin, Data Lead at MTS Web Services, decided to create a game for his daughter using AI assistants. He chose HTML5 and a simple maze concept. The very first prototypes, where an emoji penguin crawled across the screen, captivated the child.

The Idea Was Right There — A Maze

That evening I happened to see the news that a new version of a major AI service was beating top competitors on key benchmarks. The claim was that the model could write code and solve math problems even more confidently.

My daughter loves solving paper mazes, so I chose the simplest HTML5 approach to make everything run in a browser. The very first prototypes, where an emoji penguin crawled across the screen, hooked the kid.
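For a sense of how little code such a first prototype needs, here is a minimal sketch of a grid maze with an emoji penguin. It is not the game's actual code: the `MAZE` layout and the `movePlayer` and `render` names are all hypothetical, made up for illustration.

```javascript
// Hypothetical maze layout (not from the real game): '#' is a wall, '.' is a free cell.
const MAZE = [
  '#######',
  '#.....#',
  '#.###.#',
  '#.....#',
  '#######',
];

// Map keyboard keys to grid offsets.
const DIRECTIONS = {
  ArrowUp: { dx: 0, dy: -1 },
  ArrowDown: { dx: 0, dy: 1 },
  ArrowLeft: { dx: -1, dy: 0 },
  ArrowRight: { dx: 1, dy: 0 },
};

// Anything outside the grid counts as a wall.
function isWall(maze, x, y) {
  const row = maze[y];
  return row === undefined || row[x] === undefined || row[x] === '#';
}

// Return the new position, or the old one if the move is blocked or the key is unknown.
function movePlayer(maze, player, key) {
  const dir = DIRECTIONS[key];
  if (!dir) return player;
  const next = { x: player.x + dir.dx, y: player.y + dir.dy };
  return isWall(maze, next.x, next.y) ? player : next;
}

// Draw the maze as text, with the penguin on the player's cell.
function render(maze, player) {
  return maze
    .map((row, y) =>
      [...row]
        .map((cell, x) => {
          if (x === player.x && y === player.y) return '🐧';
          return cell === '#' ? '⬛' : '⬜';
        })
        .join('')
    )
    .join('\n');
}
```

In a browser, one would call `movePlayer` from a `keydown` listener and write the result of `render` into a `<pre>` element. Keeping the move logic free of DOM calls also makes it easy to test outside the page.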

I had barely typed a few lines of prompt before they turned into an interactive maze. My daughter bombarded me with questions about how it worked, and I, riding the wave of enthusiasm, decided to sketch her a development diagram in draw.io. Her six-year-old brain surveyed the rectangles, diamonds, and arrows and delivered the verdict: "Dad, this is just boogers." And she went to bed. Mission number one, putting the child to sleep, was accomplished.

But I was hooked. That old, buried desire to make games surged back: the one that brought me to IT in the first place, and which the offers on the job market had successfully killed (though I do love my data field too). I came to my senses at four in the morning with a burning desire to bolt on a couple more features, but my self-preservation instinct won.

In the morning, my wife conducted an interrogation:

"What time did you go to bed?"

"Four. And I forced myself! Could have kept going until six."

"Have you completely lost it?"

That's when I had to play my trump card: "Honey, I wasn't just messing around! I made a game! I'll publish it on Google Play, we'll launch, we'll make millions!" The ice in her gaze began to crack: "Ah... Well, in that case, fine."

So the gauntlet was thrown (by me, to myself). Challenge accepted. On to serious business.

Proof of Vibe: From Idea to Game Before the Coffee Gets Cold

Great: vacation had just started, so there was plenty of time. The first thing I asked the AI was whether I could even submit my creation to Google Play. The answer was encouraging: there were tons of utilities, everything published beautifully, and it was ready to give me a step-by-step guide for each one. Well then, all that was left was to vibe-code the game itself.

I began gradually expanding functionality and adding various features to the simple maze. AI played the role of my personal game designer: recommending music, finding resources with free assets, generating code for menus and theme systems.

I barely validated the code. Sure, I had experience integrating JS charts into an open-source BI tool and writing custom CSS, but this many nested ifs and fors was still a bit outside my comfort zone.

However, the "one file for everything" strategy quickly exhausted itself. This index.html mutant was growing before my eyes, AI was rewriting it ever more slowly, and each iteration became a lottery: will the icons break or will a button stop working? More and more often, after adding a new feature, the game would refuse to start — and the chat helpfully offered a "Fix error" button, rewriting all the code again and again, sometimes cutting off due to connection timeouts.

Okay, Time to Grow Up

I asked AI how normal people structure these things. After a small refactoring session, our experimental file transformed into something resembling an actual project:

    /my-game/
    ├── index.html
    ├── spritemap.json     // Sprite map
    ├── /css/
    │   └── style.css
    ├── /js/
    │   ├── main.js        // Main file: initialization, game loop
    │   ├── billing.js     // Payment plugin
    │   └── config.js      // Level settings and localization
    └── /assets/
        ├── /images/
        └── /audio/

And then I caught myself thinking about the contrast with my day job. In the world of big data, I use AI very selectively. It's simply impossible to load all the business process context into it. You can't explain to it that the integration format is approved, but there's also Larisa Alekseevna from the adjacent department who sometimes comes to work on Saturdays (which in itself is an alarming sign), manually creates an Excel report for loading corrections, and can accidentally swap columns around. Such cases are a puzzle for a grey-haired senior. But here, in my little standalone project, everything is clear, logical, and predictable.

From PoC to MVP Through a Minefield

At some point, development velocity started dropping. Yes, we had split the code into files, the number of hallucinations had noticeably decreased, but it was only a reprieve. Halfway through I realized I had stumbled into the Pareto principle: in my case, the remaining half of the features would take one and a half times longer than my entire vacation.

The project was getting more complex: new game modes appeared, enemies with their own logic, control nuances for different devices. Testing uncovered more and more inconsistencies. Asking AI to "fix this" increasingly led to something already working getting broken or simplified, while the desired result remained elusive.

At some point a post popped up in my feed about yet another AI reaching yet another pinnacle. I irritably complained to a friend that pinnacles were all very well, but my game was freezing hard one time in ten when the penguin collided with the ghost. In response, I got a rhetorical question: "So who's screwing up: the AI, or the person writing it bad prompts?" Not funny, but it did make me think.

I realized I needed to change my approach. Until then I had tried to describe each task to AI as thoroughly as possible, with all the context, hoping to get everything at once. Now I understood that this was a dead end: the more complex the request, the worse the result. Just like in real development, don't try to boil the ocean; move in short iterations.

Things improved: even fewer hallucinations, AI understood requirements more precisely, many bugs disappeared. But the cursed ghost bug remained.

My personal "Groundhog Day" began. Evening: new hypothesis, new prompt. AI: "Yes, boss, I totally get it, now it'll 100% work!" Night: test, freeze. Morning: 4:00 AM on the clock, I'm wrecked, zero progress.

I was lying in bed staring at the ceiling — and suddenly an epiphany hit... The idiot here wasn't AI, it was me. The person who had mentored juniors and drilled into them: "You can't fix a bug without logging, unless you have psychic powers!" — was trying to debug blind! I was violating my own cardinal rules.

Okay, new plan. I asked AI to add logging to the code. And there it was! The freeze turned out to be caused by a rare race condition during level restart. The fix was simple: assign a unique ID to each game session. With logs in hand, AI instantly diagnosed the issue and proposed the correct solution.
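The real fix isn't shown in this article, so here is only a minimal sketch of the general idea, assuming the freeze came from a delayed callback that belonged to the previous run of the level. Every name in it (`startLevel`, `guarded`, `log`, `logLines`) is hypothetical.

```javascript
// Hypothetical state: the ID of the session that is currently active.
let currentSessionId = 0;
const logLines = [];

// Prefix every log line with the session it belongs to, so restarts are visible in the log.
function log(sessionId, message) {
  const line = `[session ${sessionId}] ${message}`;
  logLines.push(line);
  console.log(line);
}

// Every start or restart of a level gets a fresh session ID.
function startLevel() {
  currentSessionId += 1;
  const sessionId = currentSessionId;
  log(sessionId, 'level started');
  return sessionId;
}

// Wrap a delayed callback (timer, animation end, collision handler) so that
// it runs only while the session that created it is still the active one.
function guarded(sessionId, name, callback) {
  return (...args) => {
    if (sessionId !== currentSessionId) {
      log(sessionId, `stale callback "${name}" ignored (active session: ${currentSessionId})`);
      return undefined;
    }
    log(sessionId, `running "${name}"`);
    return callback(...args);
  };
}

// Demo: the level restarts before the old collision handler fires.
const firstSession = startLevel();
const onGhostHit = guarded(firstSession, 'ghostCollision', () => 'player loses a life');
startLevel();  // restart: a new session becomes active
onGhostHit();  // the old handler is logged and skipped instead of touching the new level
```

The session-tagged log lines are what make this kind of bug visible in the first place: a line from an old session appearing after a new "level started" line points straight at the race.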

AI is not a magic wand but a tool that requires the same engineering approach as any other technology. Iterations, debugging, logging — without this gentleman's toolkit, development turns into hell.

On Habr, by the way, there's already an article that lays out all my suffering and nighttime epiphanies in a convenient guide format. Had I read it then, I would have saved a ton of nerves and time. But alas, it appeared only after all the bruises had already been collected.

The core mechanics were ready: the penguin runs, the ghosts fly, nothing freezes. The final touch: I asked AI a question and got a new set of instructions for creating an Android project using Capacitor and building an APK. On my phone, everything worked perfectly. Time to think about higher matters... About that million dollars promised to my wife.

When Your Vibe Code Meets Someone Else's API

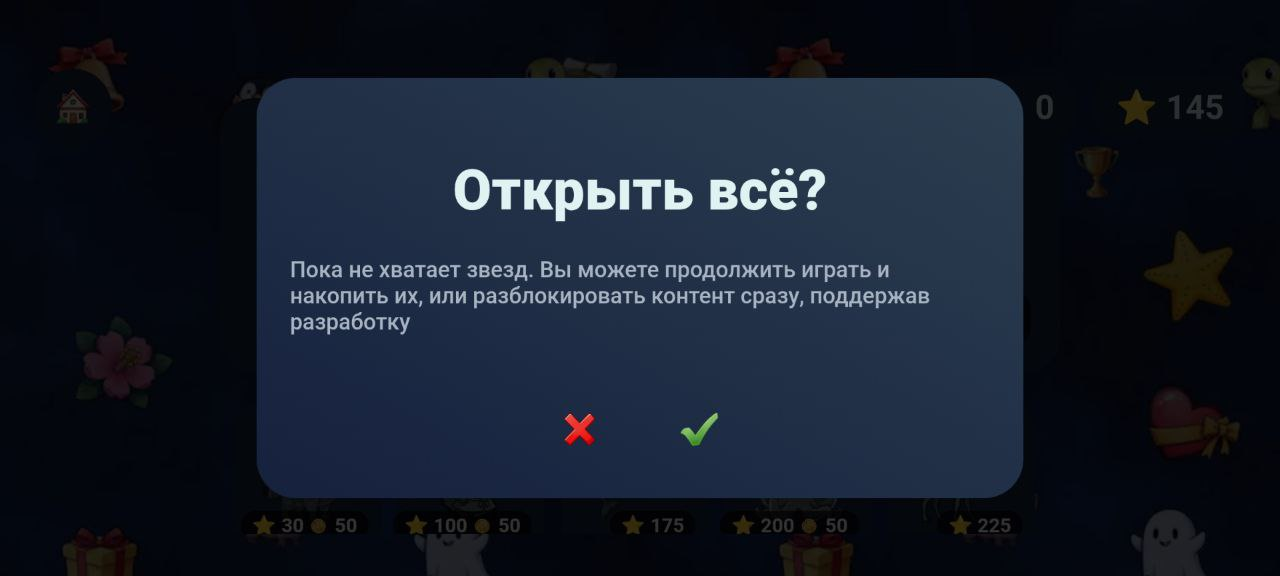

My monetization philosophy is simple and straightforward: no ads, just an honest "Unlock Everything" button. This is a version I'd comfortably install for my own daughter. AI offered a choice: integrate a ready-made commercial plugin with a free tier, or write my own. I glanced at the documentation and saw a warning: "Google Cloud setup alone will take 2-3 hours." Seriously? I could write my own from scratch in that time and still have time for coffee!

AI quickly cobbled together instructions, I carefully followed them — and the APK stopped building. Dependency error. Well... happens to the best of us. After several rounds of fixes, the build was still failing. Fine, 2-3 hours on Google Cloud setup isn't the steepest price to pay.

But the universe decided I hadn't learned my lesson well enough. Even with the official plugin and AI's instructions, the build crashed again. I fed AI everything I had: documentation, a link to the vendor's working example from their GitHub. AI confidently generated fix after fix, but the error persisted.

I started reading the documentation myself. Things didn't add up: this isn't some no-name project, it's a commercial plugin with excellent examples. I fairly quickly found instructions in the docs for exactly how dependencies should be declared in my case. But they directly contradicted AI's advice. As an experiment, I copied the text from the docs and sent it to the chat. AI's response left me, to put it mildly, bewildered: "Yes, the documentation is correct, but in your specific case it won't help."

Human or AI? That is the question. I trusted my experience, did it as written in the docs, and... the error vanished. Build passed. The plugin still wasn't working, but the APK was built.

I wonder how many more hours I would have wasted blindly following the "assistant"?

At that moment I finally formulated a new rule for myself. AI is not a senior architect. It's your junior. Talented, fast, but a junior who doesn't always understand context and can, with incredible confidence, fix what isn't broken. And your job as team lead is not to blindly follow it, but to review its work, read the documentation, and take responsibility for commits yourself. Otherwise, instead of a miracle you'll get blown deadlines and a sleepless night hunting for a stupid error.

So: the app is published in closed testing. Payment goes through, content unlocks. Victory?

8 Gigabytes of RAM Will Be Enough for Everyone

Not so fast. The AI mentor immediately reminded me that testing on my own device isn't enough for Google's store: if the ANR ("Application Not Responding") rate or the crash rate gets too high, the app will be penalized and vanish from search results. It also suggested testing on emulators in Android Studio. That's when I looked at my laptop. Once upon a time I was sure that 8 GB of RAM would be enough for everyone. Well, for Caesar 3, Heroes of Might and Magic 2, and a browser, maybe. Turns out, in 2025, it's not even enough to launch an emulator of a budget phone in Android Studio.

I don't know, maybe I was doing something wrong, but in my case emulating even simple devices took forever to start, and then everything lagged even before launching the game itself. I didn't want to waste time waiting.

Our cluster has a product called SunQ — a mobile farm for app testing. I caught up with the guys at the office to find out how to get access. Turns out it's a small but still bureaucratic process. And in my case, it wouldn't be easy to explain why a Cluster Data Lead suddenly needed access to a mobile farm, and to sideload an external application at that.

I called the product's CPO. We agreed to meet at a cafe to discuss. While waiting for my colleague, girls at the next table were discussing the "vibes" of a recent party. After my experience coding with AI, that word already makes my eye twitch.

Finally, my colleague arrived and gave me two excellent pieces of advice:

- Why don't you just register on the public instance? There's a 21-day trial, more than enough for your needs.

- Did you add a capybara to the game? (At that point, not yet.)

Golden words! I registered for the service, picked the weaker phones, and tested. On a budget Xiaomi with 2 GB of RAM, everything was fine, but on a Samsung with Android Go, it looked pathetic.

Optimization was needed. Fortunately, I had just gotten a trial of another popular AI service. I fed the code to both. Their advice differed. So I set up a confrontation: I simply started copying one's answers into the other's chat.

After several rounds of this AI battle ("He said your requestAnimationFrame is garbage!") they finally agreed and produced a shared pool of optimizations. One AI is good, but two are better.
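I'm not listing the agreed optimizations here, so the following shows only one common candidate for weak devices, as an assumption rather than what the two AIs actually produced: capping how often the game redraws inside a `requestAnimationFrame` loop. The `createFrameLimiter` helper and the 30 FPS target are hypothetical.

```javascript
// Returns a function that decides, from a timestamp in milliseconds,
// whether enough time has passed since the last rendered frame.
function createFrameLimiter(targetFps) {
  const minFrameMs = 1000 / targetFps;
  let lastRenderMs = -Infinity;
  return function shouldRender(nowMs) {
    if (nowMs - lastRenderMs < minFrameMs) return false; // too early: skip this frame
    lastRenderMs = nowMs;
    return true;
  };
}

// Browser usage sketch (update and draw are placeholders for the game's own functions):
//
//   const shouldRender = createFrameLimiter(30);
//   function loop(nowMs) {
//     if (shouldRender(nowMs)) {
//       update();
//       draw();
//     }
//     requestAnimationFrame(loop);
//   }
//   requestAnimationFrame(loop);
```

Skipping frames this way trades smoothness for less work per second, which can matter more than visual polish on a 2 GB device.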

Then came three nights of final polishing, during which I exhausted all tokens while trying to find a prompt that would make AI produce icons in a consistent style matching the game. Then localization of pages, where the AI translator sometimes hallucinated so badly I cross-checked everything through a second AI. After all these details — finally, release.

How I Hit the Charts and Made Almost Nothing (And Why It Doesn't Matter)

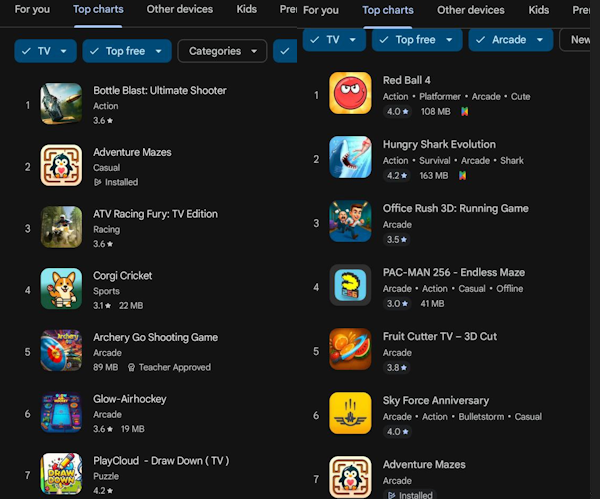

A week later, the game climbed the chart of top new games for Android TV, then moved into the main chart. It didn't really bring in money, though. But could there have been a different outcome?

I made the game for a kid, without pay-to-win junk or ads. Monetization was there for show. Yes, you can buy all the content at once in the game, but as my friend said, the main "problem" with my business plan is that unlocking content through gameplay is much more interesting than purchasing it.

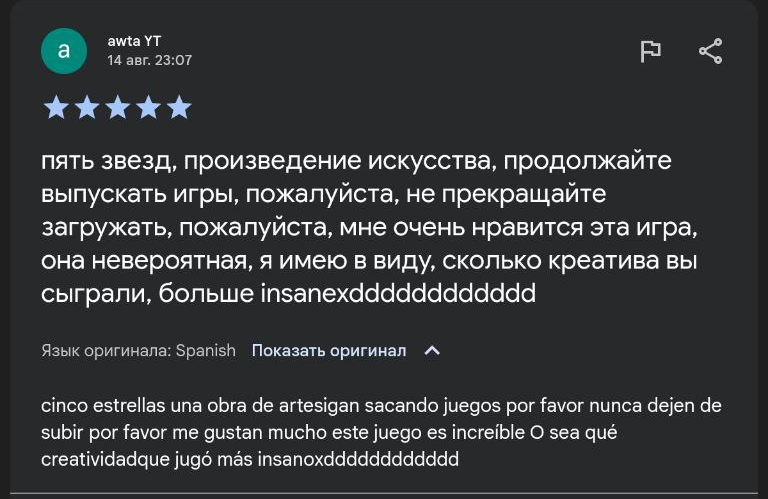

But I did receive lots of warm reviews. An eight-year-old girl from Chile, a grateful parent from Turkey, and many others — the game found its audience. The game lives on and keeps evolving (naturally, at the cost of my continued battles with AI).

And Here We Come to the Main Point

AI as a technology, despite all the hype and glitches, gave me (and can give anyone) the ability to create something of their own. I have a subscription to an app with daily English crossword puzzles. And I think I won't be renewing it. I'd rather spend another couple of evenings (weeks) and build crosswords tailored exactly to my needs. And maybe those crosswords will bring that million dollars. Who knows...

In short, I'm tired, drained, and squeezed dry like a lemon. And I will absolutely do it again.

P.S. As of publication, the game is no longer in the Android TV top games. The latest release caught a high ANR rate on some "budget" TVs where, as it turned out, manufacturers don't update WebView (apparently to save resources), and the game simply freezes there forever. This will be fixed in the next update, and the game should return to the top. And if someone from a budget OEM TV manufacturer happens to read this article, I'd be grateful if you donated a couple of devices to the SunQ mobile farm for live testing.
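The planned fix is not described above. One possible direction, sketched purely as an assumption, is to read the Chromium version that Android WebView reports in its user agent string and fall back to a lighter mode on old engines. The `MIN_SUPPORTED_MAJOR` threshold, the `'lite'` and `'full'` mode names, and both function names are hypothetical.

```javascript
// Hypothetical threshold: the oldest Chromium major version the full game is tested on.
const MIN_SUPPORTED_MAJOR = 80;

// Android WebView user agents contain a "Chrome/<major>.<minor>..." token.
// Returns the major version as a number, or null if the token is missing.
function getChromiumMajorVersion(userAgent) {
  const match = /Chrome\/(\d+)\./.exec(userAgent);
  return match ? Number(match[1]) : null;
}

// Choose a conservative mode for old or unrecognized engines.
function pickRenderMode(userAgent) {
  const major = getChromiumMajorVersion(userAgent);
  if (major === null || major < MIN_SUPPORTED_MAJOR) return 'lite';
  return 'full';
}
```

In the app this would be called as `pickRenderMode(navigator.userAgent)` at startup. Detecting the specific missing feature is usually more reliable than version sniffing, but a version check is a quick way to confirm from logs which devices are affected.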