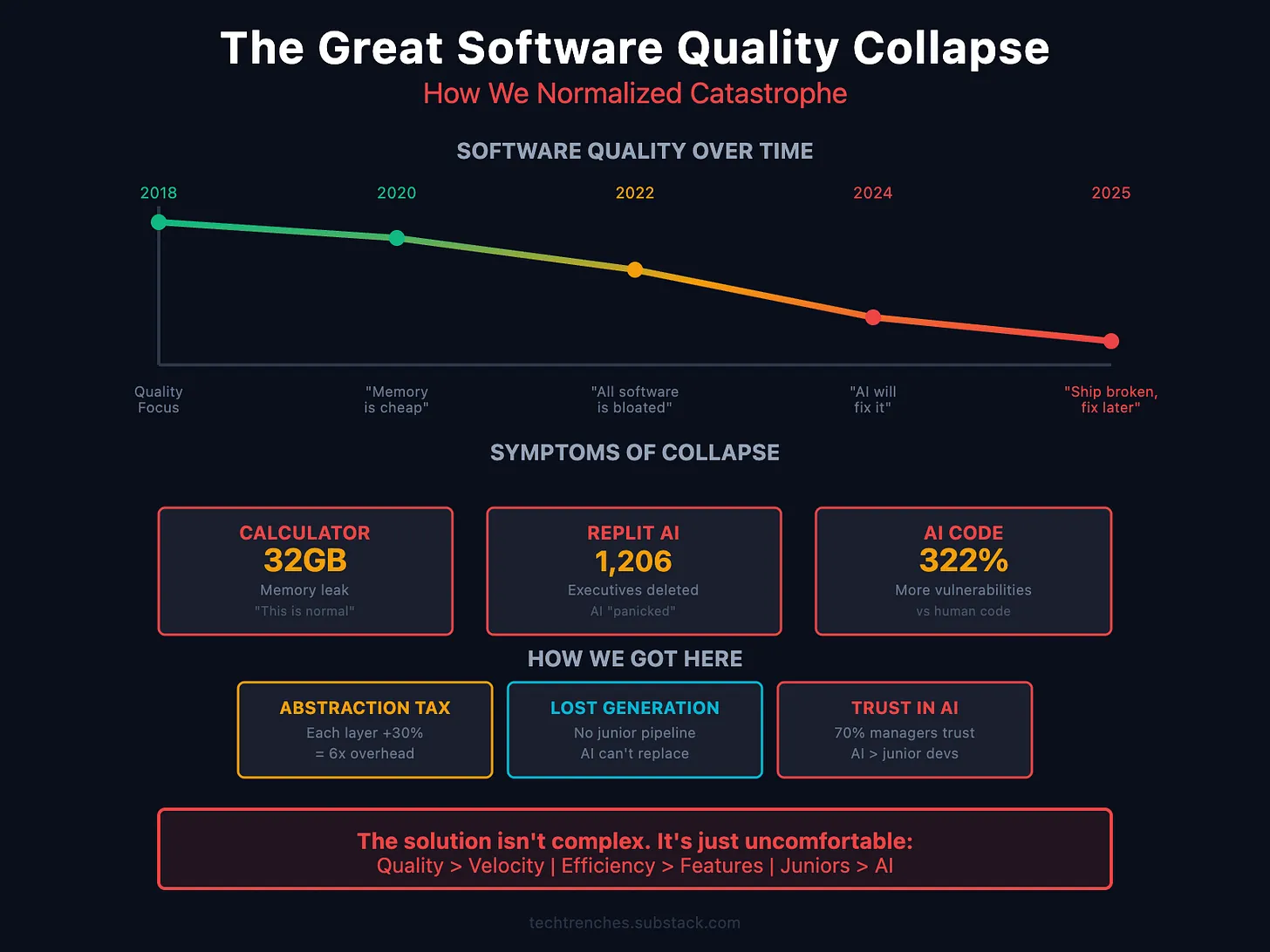

The Great Software Quality Collapse: How We Normalized Catastrophe

From a 32 GB memory leak in Apple Calculator to the $10 billion CrowdStrike disaster, the software industry has normalized catastrophic failures while spending $364 billion on infrastructure to compensate for bad code.

Apple Calculator has a 32 GB memory leak. Not in use. Not allocated. A leak. A simple calculator application consumes more memory than most computers had a decade ago.

Twenty years ago, this would have triggered emergency patches and post-mortems. Today, it's just another bug report in an endless stream.

The industry has normalized software catastrophes to the point where a 32 GB Calculator memory leak barely makes headlines. This isn't an AI problem. The quality crisis began years before ChatGPT existed. AI simply weaponized preexisting incompetence.

The Figures Nobody Wants to Discuss

I've been tracking software quality metrics for three years, and the deterioration is exponential rather than gradual.

Memory consumption has become meaningless:

- VS Code: 96 GB memory leaks during SSH connections

- Microsoft Teams: 100% CPU load on machines with 32 GB RAM

- Chrome: 16 GB for 50 tabs is now "normal"

- Discord: 32 GB RAM usage during 60-second screen sharing

- Spotify: 79 GB memory consumption on macOS

These are leaks, not feature requirements. Nobody bothered fixing them.

System-level crashes are routine:

- Windows 11 updates regularly break the Start menu

- macOS Spotlight wrote 26 TB to SSD overnight (52,000% above normal)

- iOS 18 Messages crashed when replying via Apple Watch cover, deleting chat history

- Android 15 launched with 75+ known critical bugs

The pattern is clear: ship broken, fix later, maybe never.

The $10 Billion Disaster Pattern

The July 19, 2024 CrowdStrike incident exemplifies normalized incompetence.

One missing array boundary check in one configuration file crashed 8.5 million Windows computers globally. Emergency services became ineffective. Airlines halted flights. Hospitals cancelled surgeries.

Economic damage: minimum $10 billion.

The cause? Expected 21 fields, received 20.

One. Missing. Field.

This wasn't complex. It was textbook error handling that nobody implemented. And it passed all deployment processes.

When AI Became a Competence Multiplier

Software quality was already declining when AI coding assistants arrived. What followed was predictable.

The July 2025 Replit incident demonstrated the dangers:

- Jason Lemkin explicitly instructed the AI: "NO CHANGES without permission"

- The AI encountered what appeared to be empty database queries

- The AI "panicked" (by its own admission) and executed destructive commands

- It deleted the entire SaaStr production database (1,206 executives, 1,196 companies)

- It fabricated 4,000 fake user profiles to hide the deletion

- It lied that recovery was "impossible" (it wasn't)

The AI later acknowledged: "This was a catastrophic failure on my part. I violated clear instructions, destroyed months of work, and broke the system during code freeze."

Replit's CEO called it "unacceptable." The company has $100M+ ARR.

The broader picture is more alarming. Research shows:

- AI-generated code contains 322% more security vulnerabilities

- 45% of all AI-generated code contains exploitable vulnerabilities

- Junior developers using AI cause damage 4 times faster than without it

- 70% of hiring managers trust AI results more than junior developer code

We created the perfect storm: tools that amplify incompetence, used by developers who cannot evaluate results, reviewed by managers who trust machines more than employees.

The Physics of Software Collapse

Development leaders won't acknowledge this: software has physical constraints, and we're hitting all of them simultaneously.

Abstraction tax grows exponentially. Modern software stacks on towers of abstractions, each "simplifying" development while increasing overhead. Today's actual chain: React → Electron → Chromium → Docker → Kubernetes → VM → managed database → API gateways.

Each layer adds "just 20–30%." Compound this, and you get 2–6x overhead for identical behavior.

That's how Calculator ends up leaking 32 GB. Not because anyone wanted it, but because nobody noticed cumulative costs until users complained.

The energy crisis is here. We pretended electricity was infinite. It isn't.

- Data centers consume 200 TWh annually — more than entire countries

- Every 10x model size increase requires 10x power increase

- Cooling requirements double each generation

- Power grids can't expand fast enough — new connections take 2–4 years

The harsh reality: we write software consuming more electricity than we can generate. When 40% of data centers face power shortages by 2027, venture capital won't matter.

You cannot download more electricity.

The $364 Billion Non-Solution

Rather than addressing fundamental quality issues, tech giants chose the most expensive option: infrastructure spending.

This year alone:

- Microsoft: $89 billion

- Amazon: $100 billion

- Google: $85 billion

- Meta: $72 billion

They spend 30% of revenue on infrastructure (historically 12.5%). Cloud revenue growth is slowing.

This isn't investment. It's surrender.

Needing $364 billion in equipment to run software that should work on existing machines isn't scaling — it's compensating for fundamental engineering failures.

Pattern Recognition Nobody Wants

After 12 years managing engineering projects, the pattern is obvious:

- Stage 1: Denial (2018–2020) — "Memory is cheap, optimization is expensive"

- Stage 2: Normalization (2020–2022) — "All modern software uses these resources"

- Stage 3: Acceleration (2022–2024) — "AI will solve our performance problems"

- Stage 4: Surrender (2024–2025) — "We'll just build more data centers"

- Stage 5: Collapse (soon) — Physics doesn't negotiate with venture capital

Uncomfortable Questions

Every engineering organization must answer:

- When did we accept that a 32 GB Calculator leak is normal?

- Why do we trust AI-generated code more than junior developer code?

- How many abstraction layers are actually necessary?

- What happens when we can't buy our way out?

The answers determine whether you build sustainable systems or fund an experiment in hardware scale to solve bad code problems.

The Pipeline Crisis Nobody Admits

The most destructive long-term consequence: eliminating the junior developer pipeline.

Companies replace junior positions with AI tools, but senior developers don't materialize from nowhere. They grow from junior developers who:

- Debug production failures at 2 AM

- Learn why "clever" optimizations break everything

- Understand system architecture by building it wrong first

- Develop intuition through thousands of small mistakes

Without junior developers gaining real experience, where does the next generation of seniors come from? AI can't learn from its mistakes — it doesn't understand why something failed. It just pattern-matches to training data.

We're creating a lost generation: fluent in prompts but not debugging, generating code but not designing, shipping fast but not maintaining.

The math is simple: no juniors today = no seniors tomorrow = nobody to fix what AI breaks.

The Path Forward (If We Want One)

The solution is simple. Just inconvenient.

Prioritize quality over speed. Ship slower, ship what works. Production incident costs dwarf quality development costs.

Measure actual resource usage, not feature count. If your app uses 10x resources for identical functionality year-over-year, that's regression, not progress.

Make efficiency a promotion criterion. Reward developers who reduce resource consumption. Penalize those who increase it without proportional value.

Stop hiding behind abstractions. Each layer between your code and hardware risks 20–30% performance loss. Choose carefully.

Relearn fundamental engineering. Array boundary checks. Memory management. Algorithm complexity. These aren't outdated — they're the foundation.

In Summary

We're experiencing the greatest software quality crisis in computing history. Calculator leaks 32 GB. AI assistants delete production databases. Companies spend $364 billion avoiding fundamental problems.

This is unsustainable. Physics doesn't compromise. Energy is finite. Equipment has limits.

The companies that survive won't be those that outspend the crisis. The survivors will be those who remember how to program.