Most People Don't Care About Software Quality

An essay exploring why mainstream users are indifferent to software bloat, poor design, and degrading quality -- drawing parallels with photography, cinema, and consumer culture to argue that only professionals notice the decline.

On Habr you can sometimes hear complaints about the degradation of web design, interfaces dumbed down for more "primitive" users, the enshittification of code hosting platforms, software bloat, and other signs of a worsening world. It seems like every useful website on the internet eventually turns into garbage with infinite scrolling, dopamine hooks, and monetization.

But this degradation has a natural cause, a very simple one. The thing is, most people fundamentally don't care.

Where Did the Small Programs Go?

Someone might ask: where did the small programs go? After all, fully functional applications of 100-200 KB used to be the norm -- what happened to them? Frameworks are largely to blame for the bloating of software: Electron, React Native, and others.

And why did frameworks become popular? The reason was the growing number of platforms, which accelerated with the emergence of smartphones. Developers needed to write multiple versions of native applications for different operating systems, and cross-platform frameworks became the solution to this problem: React Native on mobile, Electron on the desktop.

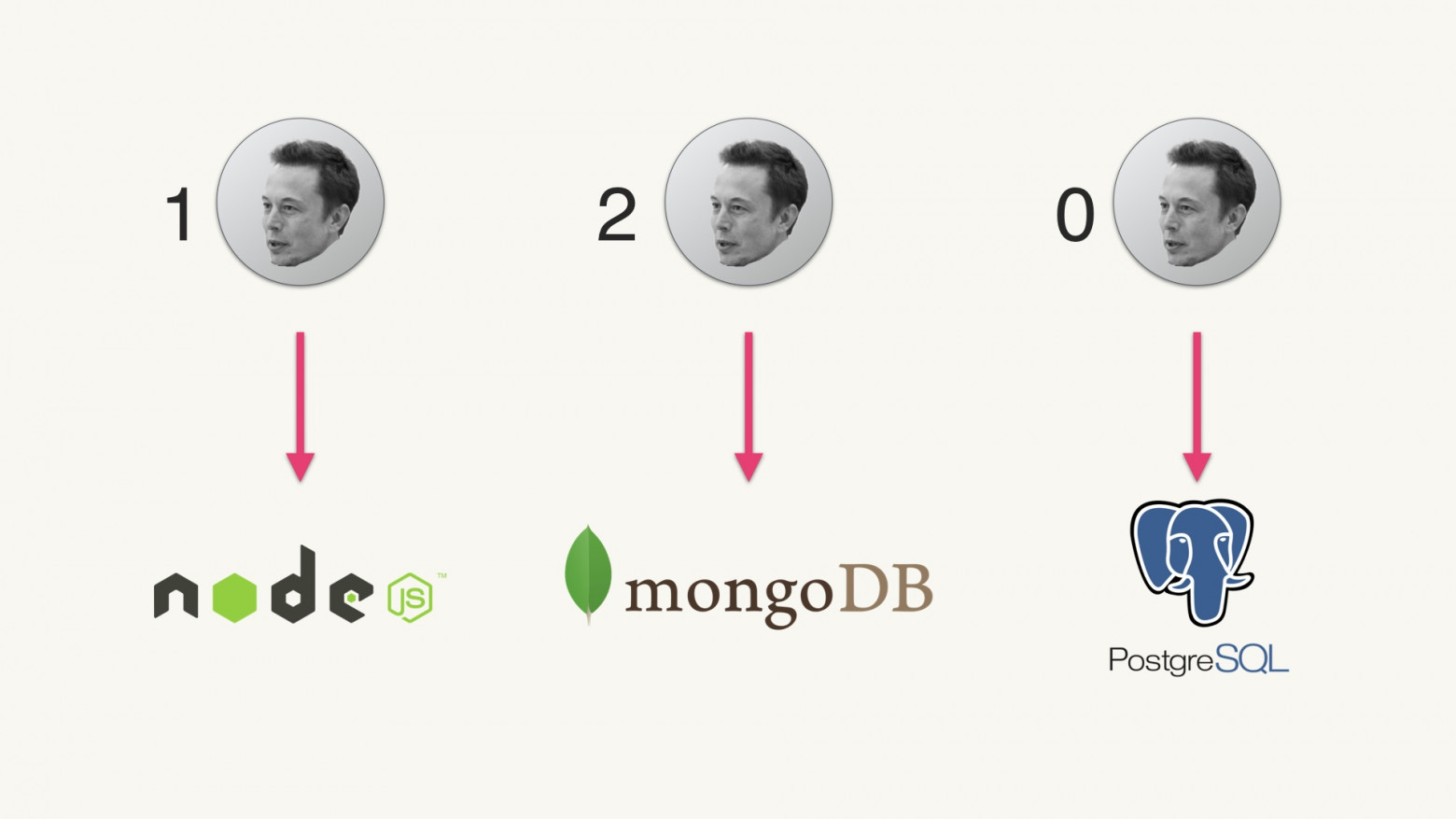

As written in the history of Electron, the real breakthrough was combining Node.js and Chromium: "This solution allowed JavaScript to interact directly with native OS capabilities while rendering a browser UI, thereby providing the highly desired combination for many web developers."

This architecture made it easier to release a desktop version of a web application, also providing automatic updates and simplifying the UI development process. But it also spawned well-known problems:

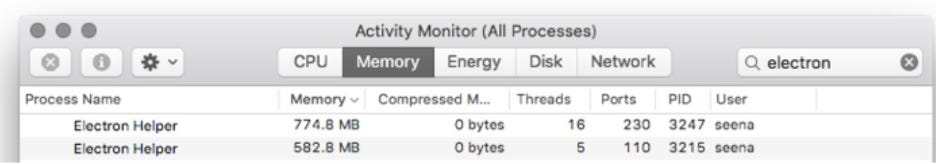

- Program bloat, massive memory and CPU consumption. Each Electron application contains its own instance of Chromium.

- Reduced performance

- Decreased stability
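The first point is simple arithmetic. Here is a minimal sketch, with purely hypothetical sizes (the 100 MB runtime and 5 MB of application code are illustrative assumptions, not measurements), comparing applications that each bundle their own runtime with applications that share one copy:

```python
def bundled_footprint_mb(app_count: int, runtime_mb: int, app_code_mb: int) -> int:
    """Every application ships its own copy of the runtime."""
    return app_count * (runtime_mb + app_code_mb)


def shared_footprint_mb(app_count: int, runtime_mb: int, app_code_mb: int) -> int:
    """All applications reuse a single installed runtime."""
    return runtime_mb + app_count * app_code_mb


# Hypothetical numbers chosen only to show how the two models scale.
APPS, RUNTIME_MB, CODE_MB = 6, 100, 5

bundled = bundled_footprint_mb(APPS, RUNTIME_MB, CODE_MB)
shared = shared_footprint_mb(APPS, RUNTIME_MB, CODE_MB)
print(f"bundled: {bundled} MB, shared: {shared} MB")
```

In the bundled model the runtime cost is multiplied by the number of installed applications, while in the shared model it is paid once.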

And that's how we arrived at the state we're in now.

Fortunately, for most ordinary users all these problems haven't become critical. They got used to unstable application behavior, and accepted hardware slowdowns as a given. Most average users simply don't understand that this is an abnormal situation, so they don't complain.

Long-Term Software

In our time, software is often provided as a service that is deployed "continuously" (CD), wrapped in countless automated tests (CI, continuous integration). This approach allows one to hope that the new version will at least somehow work.

However, there is a huge world where continuous change is considered evil. People there value reliability and deterministic program behavior instead. This is the software that controls (nuclear) power plants, pacemakers, airplanes, bridges, and heavy machinery -- in short, anywhere safety matters. Such long-term software is designed to last for decades. All changes are carefully documented and announced in advance.

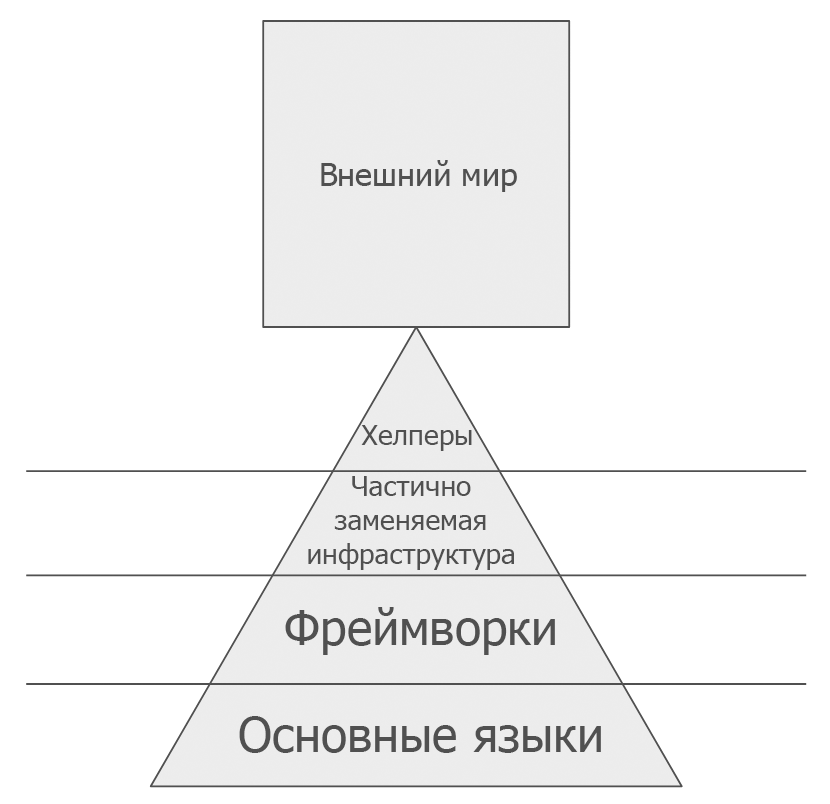

For long-term software, it's very important to minimize the number of dependencies, because each one can "go stale" over time: a new version will be released, the maintainer will change (introducing a paid subscription), or support will cease entirely. At a high level of abstraction, one can speak of four levels of dependencies: starting from the programming language and ending with working libraries and other easily replaceable tools (helpers):

You need to carefully consider which dependencies will remain relevant in 10-20 years (in accordance with the Lindy effect), and then get rid of the unnecessary dependencies and services that the program uses, such as cloud services. Any of them can disappear at any moment.
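One way to make this review concrete is a small audit script. The sketch below uses a common rule-of-thumb reading of the Lindy effect (a technology's expected remaining life is roughly equal to its current age). The `Dependency` class, the `audit` function, and all the entries are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    name: str
    age_years: float   # how long the technology has existed so far
    replaceable: bool  # can it be swapped out without a rewrite?


def lindy_expected_years(age_years: float) -> float:
    """Rule of thumb (an assumption): expected remaining life equals current age."""
    return age_years


def audit(deps, horizon_years):
    """Split dependencies into those to keep and those that need a second look."""
    keep, review = [], []
    for dep in deps:
        outlives_horizon = lindy_expected_years(dep.age_years) >= horizon_years
        if outlives_horizon or dep.replaceable:
            keep.append(dep.name)
        else:
            review.append(dep.name)
    return keep, review


# Hypothetical dependency list, from the language down to easily replaced helpers.
deps = [
    Dependency("programming-language", 35, replaceable=False),
    Dependency("embedded-database", 22, replaceable=False),
    Dependency("cloud-queue-service", 4, replaceable=False),
    Dependency("date-helper-library", 3, replaceable=True),
]

keep, review = audit(deps, horizon_years=20)
print("keep:", keep)
print("review:", review)
```

Young dependencies that are hard to replace end up in the review list; young but easily replaceable helpers are tolerated because swapping them later is cheap.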

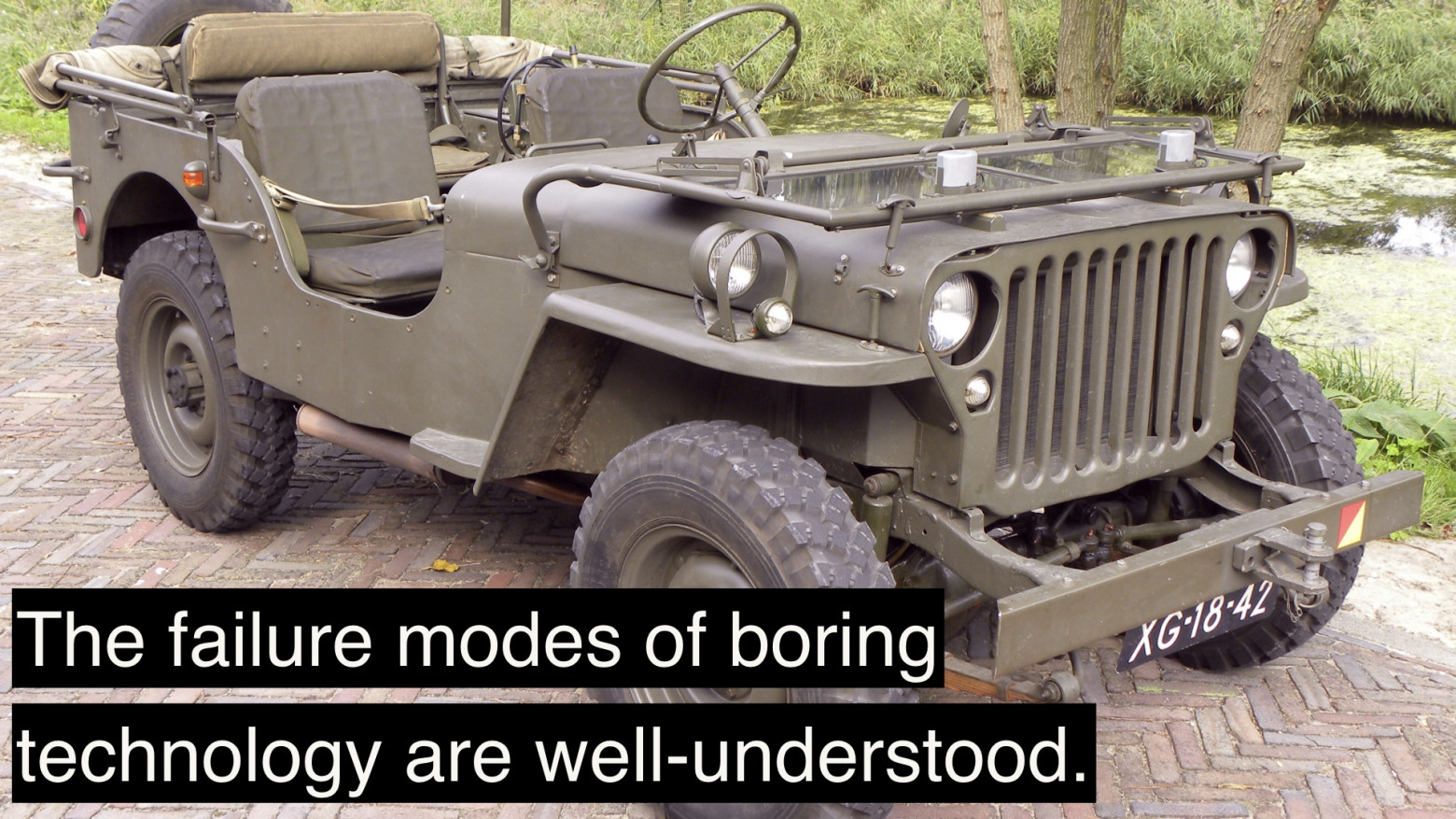

For maximum software longevity, it's recommended to choose the most boring technologies, write the most boring and simple code, like this car:

To live long, software should also be well documented, and the source code should be published. This is also a certain guarantee of software quality -- many companies need months or years to prepare code for publication. This means they clean it of unnecessary things, refactor and simplify it to produce clean code that they're not ashamed to publish. Thus, the very act of open publication improves code quality.

Long-term software is the opposite of consumer software for the mass market. Some people enjoy creating precisely such reliable, predictable, and stable programs. But if we're making consumer software, the rules are different. The mass user doesn't care as much about program quality.

Quality Is Not the Main Thing?

As sad as it may be, the mass consumer doesn't care about program quality. This applies not only to software but to other areas of life as well. Take photography, for example. Very few users will spend time reading textbooks on photography and composition, and then construct frames according to all the rules of harmony, with a grid, manually adjusting white balance and exposure, to ultimately get a perfect, beautiful shot.

No, most of us just point the camera at the subject and press the button, not particularly worrying about quality. The shot might be out of focus, with unbalanced composition, cluttered background, incorrect centering, imperfect lighting (underexposure or overexposure with harsh shadows), and other flaws. Doesn't matter. Snapped it, posted it -- and moved on.

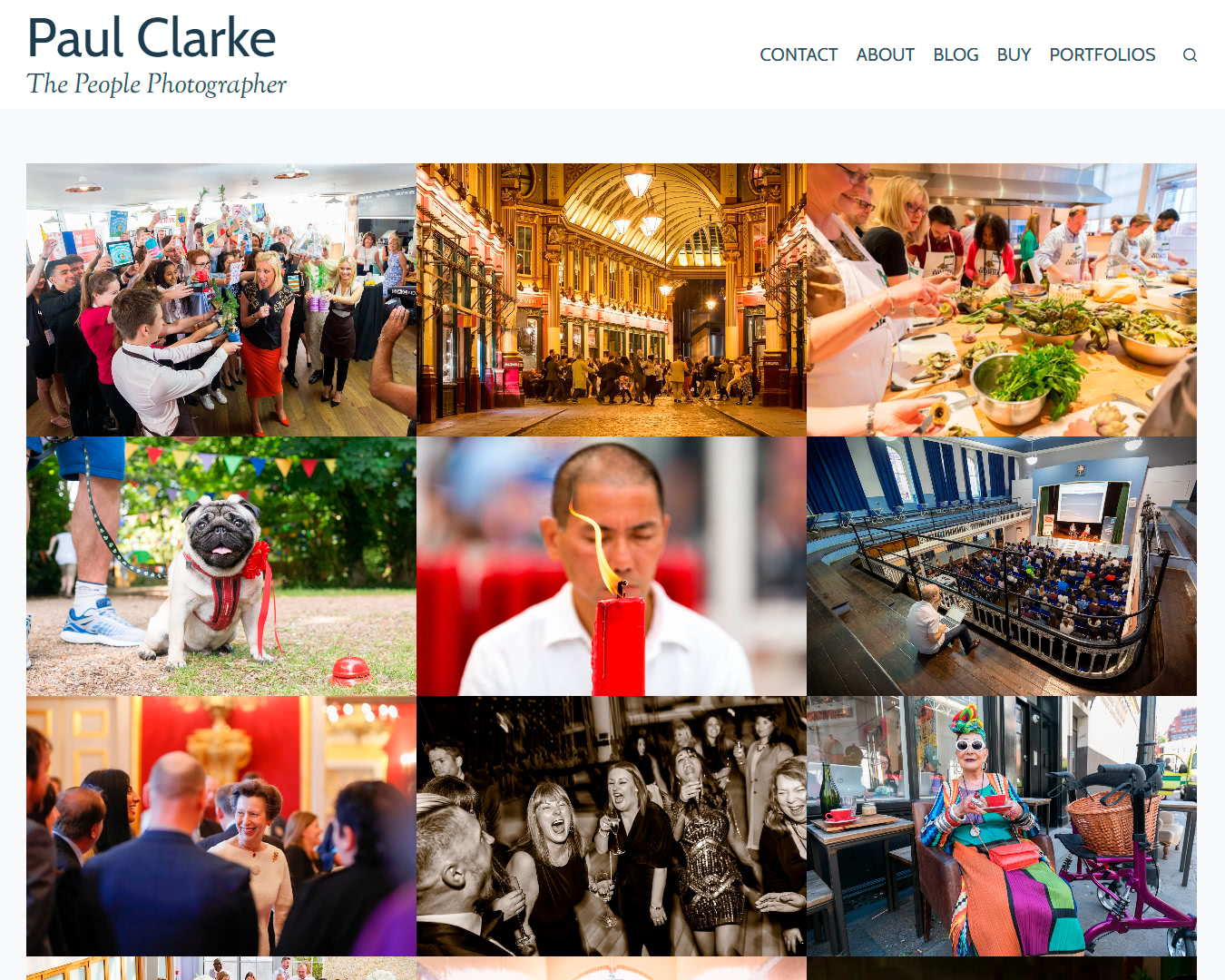

Ordinary people won't analyze and discuss the shortcomings of every photograph -- they won't even notice them. That's the domain of professional photographers. Similarly, a professional designer will immediately notice flaws on other people's websites: lack of keyboard navigation, poor kerning, absence of whitespace around illustrations, and dozens of other shortcomings. But 99% of users simply don't care because it doesn't affect them in any way.

Some designers chase perfection that will never be noticed by non-professionals. They can take pride in their craft, but most people are physically unable to notice the difference between good and bad design.

There's an excellent essay by Will Tavlin called "Casual Viewing: Why Netflix Looks Like That." In it, the author rightly criticizes Netflix's model of producing enormous quantities of low-quality content for an undemanding audience that watches movies in the background. For example, according to the essay, screenwriters are asked to have characters announce aloud what they are doing, so that viewers who are only half-watching can follow along.

Creative people are horrified by the aesthetics, bad scripts, and complete absence of creative talent. All films and series seem mediocre, and Netflix's principles go against traditional techniques of theatrical art and cinema ("Show, don't tell"). But ordinary people couldn't care less. They consume content as if nothing is wrong.

Specialists in interfaces, design, and ergonomics see flaws everywhere around them -- even in the interface of an ordinary coffee machine. Different models have completely different control logic. Buttons, sensors, displays -- everything is arranged differently, without a single standard.

The same goes for everything. Professionals see the flaws, and ordinary people don't. They don't understand the subject, don't know the context, and don't immerse themselves in the professional field of creating these objects. That's why they're fairly relaxed about the low quality of everything around them: from food products to the design of household appliances.

This applies to software too. Professional developers immediately see the shortcomings: bloated software, inflated mobile applications, sluggish 2D graphics on the most powerful CPUs of modern smartphones, which in terms of performance can rival the supercomputers of the past. But ordinary people don't see any of this.

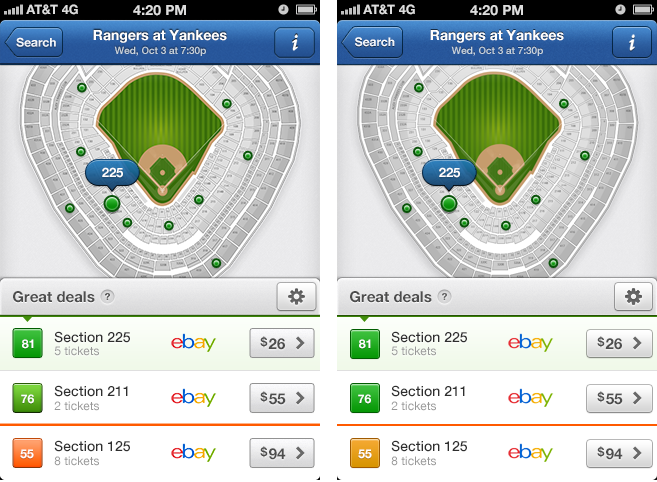

The professional competencies of developers or designers are reflected in perfectionism, extraordinary attention to detail. For example, designers study the pixel grid on the displays of different mobile devices to calculate optimal subpixel rendering:

Audiophiles complain about the extreme dynamic range compression in modern music, a product of the "loudness war." They criticize the quality of MP3 encoding and can't listen to music through mediocre headphones or in a room without sound-absorbing walls. But for ordinary people, this means nothing. We just listen.

Likewise, ordinary people just launch programs to do what they need. They press the buttons without ever thinking that those buttons could be placed more conveniently.

Perhaps professionals shouldn't take the enshittification of everything around them so much to heart? Maybe this is a normal trend for the modern world, where the majority rules?

Most people don't actually want to spend cognitive energy on understanding subtext, such as the political subtext of superhero movies. People often watch films "in the background," just to enjoy the shootouts and the like.

If the public accepts a mediocre mass product, then why bother? From a business perspective, it's unprofitable to spend extra resources on bringing it to perfection. It's enough to produce a "minimum viable product" that will satisfy 99% of consumers.

This isn't about most people being undemanding or poorly educated. No, it's simply that all of us are amateurs in the vast majority of fields: in everything where we're not specialists.

That's why most people don't care about software quality.

To be more precise, it's not that people don't care; they usually lack the competence, attention, and time to distinguish a quality product from a poor one. So the result is predictable. The majority eats tasteless supermarket fruit and vegetables, watches stupid movies, launches gigabyte-sized applications -- and lives a happy life without worrying about any of it. And the process is endless. People get used to anything, so the quality of goods, software, and mass culture gradually degrades. Movies get worse, food gets lower in quality, and software gets buggier -- and perhaps all of this happens for natural reasons.

Periodic complaints from professionals on this topic are somewhat reminiscent of old Abe Simpson: