Real LLM Usage Logs Over 7 Months for 527 Employees — What People Actually Do with LLMs at Work and What's Wrong with Them

An analysis of real-world LLM usage data across 527 employees over 7 months, revealing that API access is 8.5x cheaper than subscriptions, image generation consumes 64% of the budget, and the ROI on corporate AI deployment reaches 2800%.

I was the one who implemented all of this, and we agreed that anonymized log statistics could be shared publicly. These are direct transaction counts: not analyst forecasts, not vendor presentations, but actual logs.

The company decided to get ahead of the chaos: give all employees a proper service, let them try every model on the market, and see what that would yield in productivity gains.

They were choosing between a subscription and a pay-per-token model, and fortunately chose the latter.

Fortunately, because the average user uses LLMs very differently from what you might think. For the record, the major model vendors report user counts but carefully hide request volumes and traffic, because there is extremely little of it.

Jakob Nielsen estimated that only 20% of the population can properly formulate a prompt. The rest try a couple of times and leave.

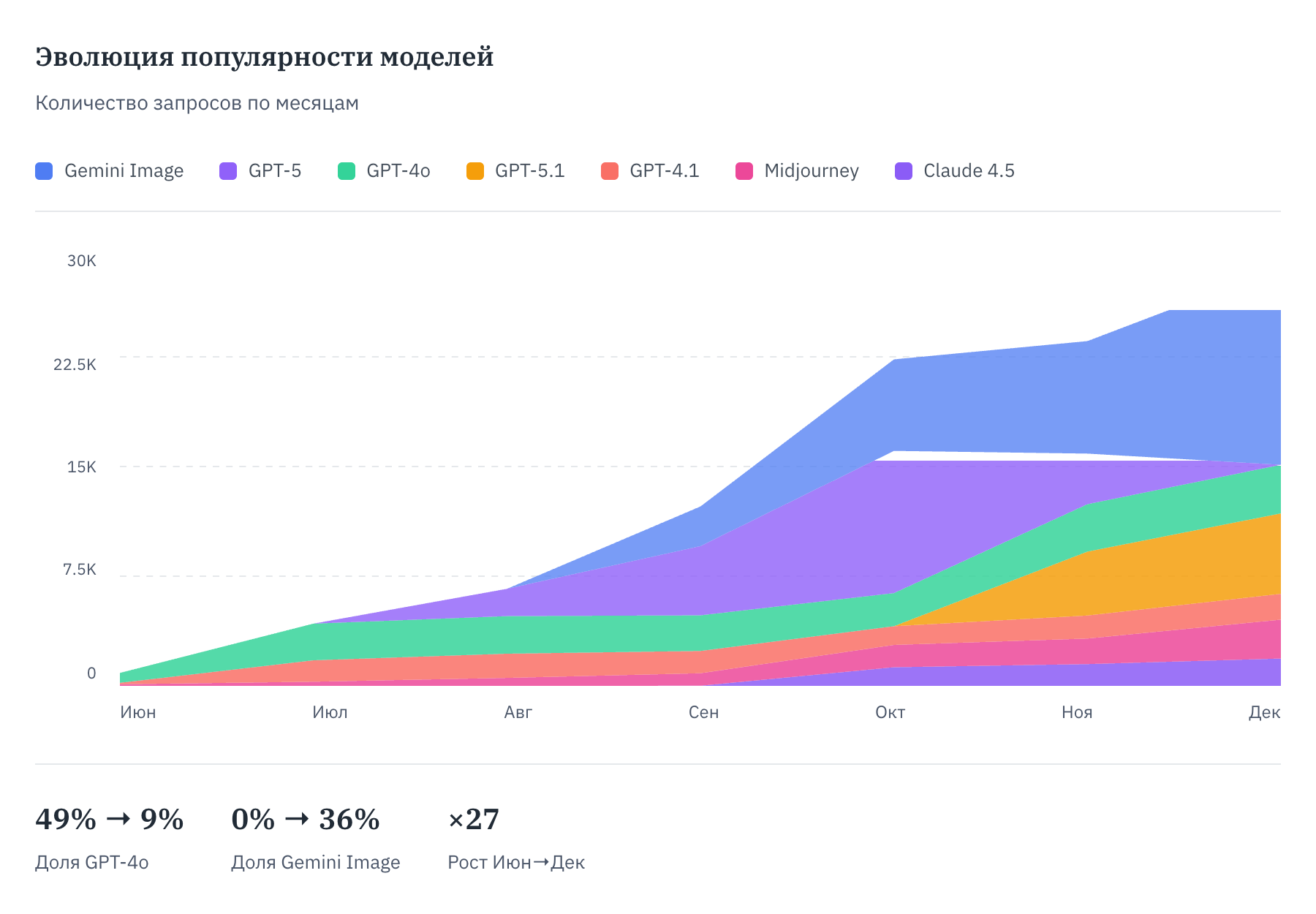

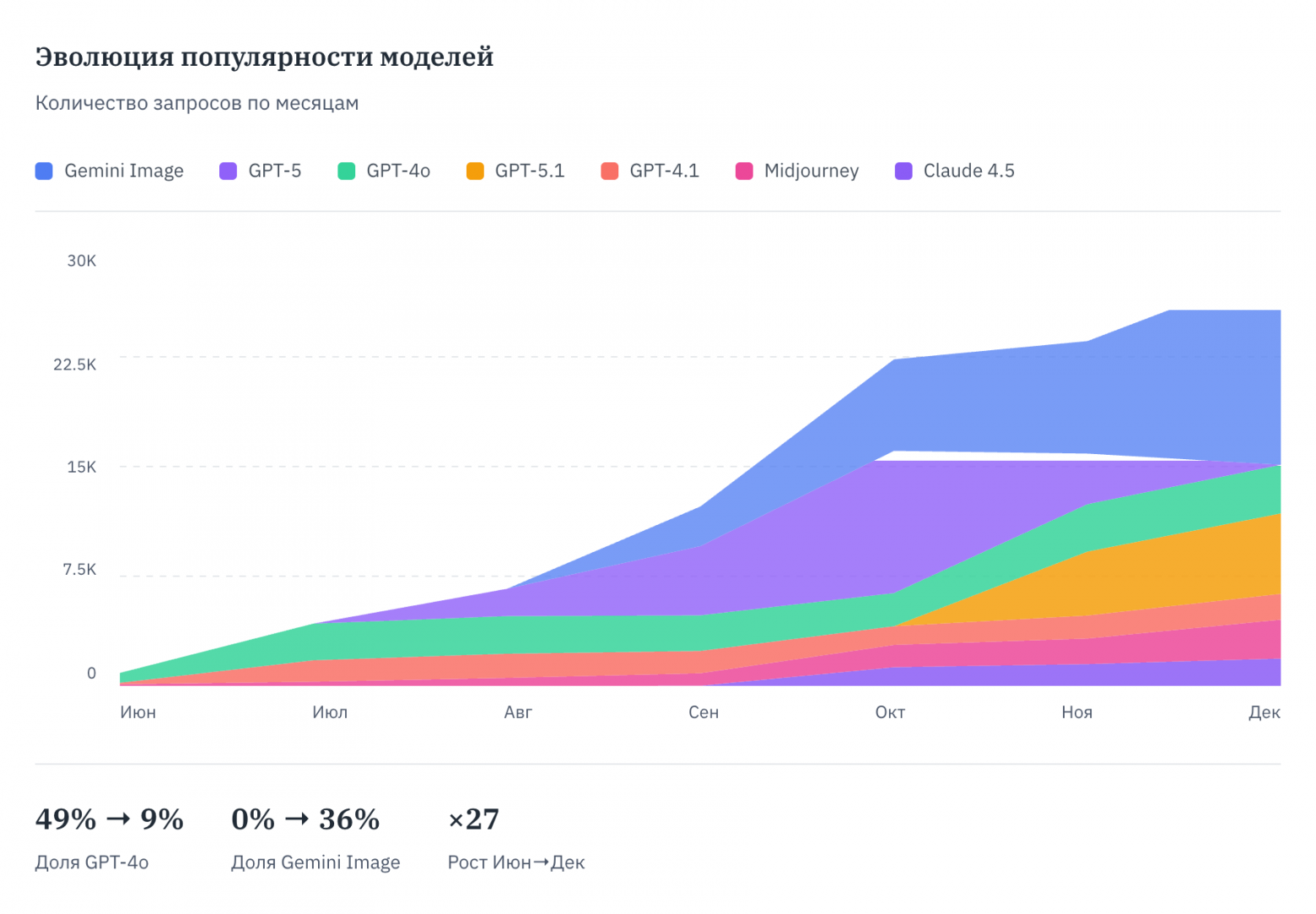

In short, over 7 months from June to December 2025:

- 416 out of 527 users tried it at least once

- 122,346 requests (averaging 42 requests per user per month)

- $6,851 in expenses (535,000 rubles, 184 rubles/month per active user)

- If they had bought $20 ChatGPT subscriptions, they would have paid 8.5 times more for the same thing.
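The headline figures above can be rechecked from three numbers in the logs plus the exchange rate given in the Methodology section. Below is a minimal sketch of that arithmetic; the variable names are mine and are not taken from the logging system.

```python
# Figures quoted in the article.
ACTIVE_USERS = 416        # users who made at least one request
MONTHS = 7                # June to December 2025
REQUESTS = 122_346
SPEND_USD = 6_851
RUB_PER_USD = 78.2        # exchange rate from the Methodology section
SUBSCRIPTION_USD = 20     # ChatGPT Plus, per user per month

user_months = ACTIVE_USERS * MONTHS

requests_per_user_month = REQUESTS / user_months
usd_per_user_month = SPEND_USD / user_months
rub_per_user_month = usd_per_user_month * RUB_PER_USD
# How many times more a flat subscription would cost per active user.
subscription_multiple = SUBSCRIPTION_USD / usd_per_user_month

print(f"{requests_per_user_month:.0f} requests per user per month")
print(f"${usd_per_user_month:.2f} ({rub_per_user_month:.0f} rubles) per user per month")
print(f"a subscription would cost {subscription_multiple:.1f}x more")
```

Note that the comparison is per active user. Buying subscriptions for all 527 employees would make the gap even larger.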

We deployed an AI aggregator, and it included image generation. 64% of the budget went to image generation.

If we count only LLM usage, including SOTA models such as Gemini 3 Pro Preview and the latest GPT and Anthropic models, it would have been just 62 rubles per month per user.

Those who understood what the model was for kept coming back consistently.

So come on in — I'll tell you what real people, when not beaten with a stick, actually do with LLMs in the real world. In practice.

AI Is Already Deployed at Your Company

Sometimes you know it, sometimes you don't, but 50–60% of your employees are already using neural networks at work. Daily. The question isn't whether to deploy. The question is whether you're controlling it or not. 71% of office workers use AI without IT approval. 38% share confidential company data with public AI services.

Yes, they will leak all your data to OpenAI. It's their problem!

Banning AI is impossible (46% of employees will continue using it even with an explicit ban). The only way to control it is to provide official access and monitor usage.

That's exactly when the decision was made to get ahead of the chaos.

Key Results from Our 7-Month Experiment

If you want to explore the data yourself — there's a page with interactive charts. 16 visualizations, from hourly activity patterns to the Pareto curve. You can get lost in it.

Where Does 62 Rubles per Month per User (or 184 Rubles per Month) Come From?

85% of companies get their AI spending forecasts wrong by more than 10%. Usually overestimating.

Three main reasons:

1. ChatGPT Plus costs $20 per month per person. For 400 employees — $8,000/month, $96,000/year. This client works through the API. Per person, it comes out to $2.35/month. The difference is 8.5 times. Why? A subscription is an all-you-can-eat buffet. You pay for the ability to eat endlessly, even if you only eat one plate. API means you pay for each plate individually. If an employee makes 50 requests per month, you pay for 50. If 500, then for 500. At what volume does a subscription become more cost-effective? At 53 million tokens per month per person for GPT-4o-mini. That's roughly 40,000 pages of text. Per month. Per employee. Realistic? No. Unless you're a proofreader or technical translator — but they specifically need non-mini models.

2. One-third of requests consume two-thirds of the budget. Image generation is 3.5 times more expensive than text. 74% of employees generated images at least once — 308 out of 416. This isn't "the design department." This is nearly everyone. Including accounting. Why accounting needs images is a separate question. According to McKinsey, only 35% of organizations use AI for images. Our 74% is double the market average. People got a taste for it. If you think images are a niche function for designers, you're wrong.

GPT Image costs $0.44 per request. Gemini Image costs $0.053. An 8x difference. Midjourney is $0.16. In September, the company switched from GPT Image to Gemini. Quietly, without fanfare. Migration took one day. 30,599 requests through Gemini over 4 months. If they had stayed on GPT, they would have paid $13,558. They paid $1,621. Savings: $11,936.

Average spend per user in September: $6.47. In October: $3.67. Down 43%, almost by half, in one month. People generated the same number of images; each one just became 8 times cheaper.

Quality for business tasks is comparable. GPT is sometimes better for text in images, but that's rarely needed. If your team has many people — budget for images. And choose a cheap provider right away.

3. 20% of users generate 79.4% of expenses. The Pareto principle holds to within a percentage point. The top 10 users (2.4% of all) spent $1,910, which is about 28% of the $6,851 budget. The leader, let's call them "Azure Thrush", spent $308 over 7 months, making 3,578 requests. Meanwhile, "Mint Badger" made 2,757 requests but spent only $139, because one generates images and the other generates text.
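Two of the calculations in the reasons above are easy to reproduce: the subscription break-even point and the savings from the image migration. The sketch below makes assumptions the article does not state: GPT-4o-mini list prices of $0.15 per million input tokens and $0.60 per million output tokens, and an even split between input and output tokens. The function names are mine.

```python
SUBSCRIPTION_USD = 20.0
# Assumed GPT-4o-mini list prices in USD per 1M tokens (not stated in the article).
INPUT_USD_PER_M = 0.15
OUTPUT_USD_PER_M = 0.60
INPUT_SHARE = 0.5  # assumed share of input tokens in total traffic


def break_even_tokens_m(subscription_usd, in_price, out_price, input_share):
    """Millions of tokens per month at which API spend equals the subscription."""
    blended = input_share * in_price + (1 - input_share) * out_price
    return subscription_usd / blended


def migration_savings(requests, old_price, new_price):
    """Money saved by serving the same requests at a lower per-request price."""
    return requests * (old_price - new_price)


tokens_m = break_even_tokens_m(
    SUBSCRIPTION_USD, INPUT_USD_PER_M, OUTPUT_USD_PER_M, INPUT_SHARE
)
print(f"break-even: about {tokens_m:.0f}M tokens per user per month")

# Per-request prices as quoted in the article (rounded).
saved = migration_savings(30_599, old_price=0.44, new_price=0.053)
print(f"image migration savings: about ${saved:,.0f}")
```

Because the per-request prices are rounded, the savings figure lands within about 1% of the $11,936 quoted above rather than matching it exactly. A different input/output mix shifts the break-even point, but not enough to bring it near a realistic office workload.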

Everyone Calculates Wrong — Everything Is 5x Cheaper

95% of AI pilots show no measurable impact on P&L — MIT, 2025. 42% of companies abandoned most AI initiatives in 2025. Why? Because they calculate the cost of deployment, not the cost of usage. They buy enterprise subscriptions for everyone, even though only 20% actively use it.

The correct calculation: API + monitoring + training. Not "how much does a license cost," but "how much does a request cost."

Those Who Tried Don't Quit

In this case, retention is 85%. Those who tried AI don't quit.

This means: if you give access to 100 employees, after six months there won't be 20 active users — there'll be 60–80. The budget will grow. Not because it got more expensive — because people got a taste for it.

This is good for business. Bad for those who didn't budget for growth.

Spreadsheets — The Expectation Gap

Accountants, PMs, analysts — everyone works with spreadsheets. Logical expectation: I'll upload an Excel file to AI and get analysis.

Reality: a person shoves a million-row file into the chat. Nothing works. The person gets frustrated.

Why? A regular chat interface can't work with spreadsheets. For that, you need agents with code interpreter — they run Python, process data in an isolated environment. That's a different product, a different price, different limitations.

Worse yet: some services pretend to analyze a spreadsheet. In reality, they take the first 100 rows and hallucinate conclusions based on them. The user gets confident nonsense.

What to do: explain the limitations upfront. AI is not a replacement for Excel. Yet. Except for Gemini and genuinely small spreadsheets. And progress is already being made.

Of course, along the way they very nearly leaked their accounting data to OpenAI and the aggregators. But there's a nuance: data sent through the API is not used for training, because there's a rule about that (see the section on data leaks below).

Image Settings Eat the Budget Unnoticed

GPT Image lets you choose quality and the number of images at once. What do people choose? Maximum quality. Four images at once. Because "what if it comes in handy."

The math: 4 images × $0.25 = $1 per single request. A person does 15–20 iterations until they get what they want. Total: $15–20 per task. When the administrators saw this in the logs, they explained to people how pricing works and restricted image access for those who didn't need it for work. The problem disappeared.
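The same arithmetic as a small function makes it easy to see how much each setting contributes. The single-image case is my own illustration and is not something measured in the logs.

```python
def cost_per_task(images_per_request, usd_per_image, iterations):
    """Total spend on one task: every iteration pays for every image in the batch."""
    return images_per_request * usd_per_image * iterations


# Settings described in the article: 4 images at $0.25 each, 15 to 20 iterations.
low = cost_per_task(4, 0.25, 15)
high = cost_per_task(4, 0.25, 20)

# Hypothetical alternative: one image per request, same price and iteration count.
single = cost_per_task(1, 0.25, 15)

print(f"4 images per request: ${low:.2f} to ${high:.2f} per task")
print(f"1 image per request:  ${single:.2f} per task")
```

The batch size multiplies the bill on every iteration, which is why it is worth capping even before any change of provider.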

Bonus: Gemini Image came out, costing 8 times less at comparable quality.

They Always Use the Most Expensive Model for Everything

If you give an employee access to all models, they'll use the most expensive one. For any task. Even for "write a client email."

GPT-5 Mini costs 6 times less than GPT-5. Gemini Flash is 7 times cheaper than Pro.

How many requests went through cheap models? 4,875 out of 123,458 (this total appears to include the 1,112 January requests that the rest of the analysis excludes). 4 (four!) percent.

Why? Status quo bias. People don't switch what works. Even if the same thing is available right next to it for pennies.

95% of enterprises overpay for AI. This isn't statistics — it's OpenAI's business model.

Solution: make the cheap model the default. The expensive one — on request. Not everyone needs Claude Sonnet 4.5 or GPT-5.2. For most tasks, GPT-5 Mini or Gemini Flash is enough.
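A minimal sketch of the "cheap by default, expensive on request" rule follows. The model identifiers are placeholders rather than real API model names, and the function is hypothetical. In practice this logic would live in the aggregator or chat front end's configuration.

```python
from typing import Optional

# Placeholder identifiers, not real API model names.
CHEAP_DEFAULT = "small-model"
KNOWN_MODELS = {"small-model", "large-model"}


def pick_model(requested: Optional[str] = None) -> str:
    """Return the cheap default unless a known model was explicitly requested."""
    if requested in KNOWN_MODELS:
        return requested
    return CHEAP_DEFAULT


# Share of requests that went through cheap models, using the counts quoted above.
cheap_share = 4_875 / 123_458

print(pick_model())               # no explicit choice
print(pick_model("large-model"))  # explicit request for the expensive model
print(f"cheap-model share in the logs: {cheap_share:.1%}")
```

The design choice is that status quo bias now works in the budget's favor: a user who never touches the model selector stays on the cheap model.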

Does It Even Pay for Itself?

AI saves 2–5 hours per week per employee — Federal Reserve, BCG, Adecco. Power users save up to 11 hours.

If we apply this data to our case:

- 400 active employees × 3 hours/week × 4 weeks = 4,800 hours/month

- Average salary 80,000 rubles/month = ~460 rubles/hour

- Savings: 4,800 × 460 rubles = 2.2 million rubles/month

- AI expenses: ~77,000 rubles/month

ROI: 2,800%. Per month.

Even if AI saves not 3 hours but 30 minutes per week — it still pays for itself 4–5 times over.
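The estimate above can be written as one function, which makes the pessimistic scenario easy to rerun. This is a sketch using the article's inputs. The function name and the four-weeks-per-month simplification are assumptions carried over from the bullet list.

```python
def monthly_roi(employees, hours_saved_per_week, rub_per_hour, ai_cost_rub,
                weeks_per_month=4):
    """Return (monthly savings in rubles, savings as a multiple of AI cost)."""
    hours = employees * hours_saved_per_week * weeks_per_month
    savings = hours * rub_per_hour
    return savings, savings / ai_cost_rub


# Base case from the article: 3 hours saved per week.
savings, multiple = monthly_roi(400, 3, 460, 77_000)

# Pessimistic case: 30 minutes saved per week.
low_savings, low_multiple = monthly_roi(400, 0.5, 460, 77_000)

print(f"base case: {savings:,.0f} rubles/month, {multiple:.1f}x the AI cost")
print(f"pessimistic case: {low_savings:,.0f} rubles/month, {low_multiple:.1f}x the AI cost")
```

The base multiple of roughly 28 to 29 is what the article rounds to 2,800%.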

The estimates are probably inflated: no adaptation period is factored in, and some usage goes to personal tasks (sending medical test results, consulting a therapist, asking for a meme for a group chat). Still, the majority of tasks came from employees' work roles.

Will the Data Definitely Leak to OpenAI?

Will it leak — definitely. Pretty much like all your Google Docs.

Will it be used for training there? No, if you work through the API.

Since March 2023, data sent through the OpenAI API has not been used for training OpenAI models by default. Anthropic and Google apply a similar no-training default to their paid APIs.

It's important to understand the difference:

- Free ChatGPT — data may be used for training (can be disabled in settings)

- API — data is not used for training by default

Logs are stored for up to 30 days for abuse monitoring. Enterprise clients can get Zero Data Retention — then nothing is stored at all.

The account administrator can see the logs and can hold accountable those who use the model for non-work purposes. Or choose not to.

Sanctions — They'll Block Everything

The risk exists. But there are solutions.

In this case, over 7 months — not a single critical outage.

One provider is not a strategy — it's a lottery. Usually everything works. But there are days when even OpenAI delivers only 90–95% successful responses — you can check on their status page. It doesn't happen every day, but for a company, even once is enough.

The gentleman's kit for 2026:

- Direct APIs: OpenAI, Anthropic, Google (Gemini), xAI (Grok)

- Aggregator: OpenRouter — if one provider is down, traffic routes through another

- Backup: Azure OpenAI — same models, different infrastructure

How it works: a request goes to the primary provider. Timeout or error — automatically switches to the next one. The user doesn't notice. Only your monitoring notices. And you at three in the morning.
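The failover logic described above can be sketched in a few lines. This is an illustration under stated assumptions, not the deployed implementation: each provider is a plain Python callable standing in for a real API client, the names are hypothetical, and in a real setup each call would carry its own network timeout.

```python
class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has failed."""


def complete_with_fallback(prompt, providers, on_failover=print):
    """Try providers in order and return (provider_name, answer) from the first that works.

    providers: list of (name, callable) pairs; a callable takes a prompt and
    either returns a string or raises (timeout, HTTP error, and so on).
    on_failover: hook for monitoring, called with a message on every failure.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any failure means: move on to the next provider
            errors.append((name, exc))
            on_failover(f"{name} failed: {exc!r}")
    raise AllProvidersFailed(errors)


# Stand-in providers for the demonstration.
def flaky_primary(prompt):
    raise TimeoutError("primary timed out")


def healthy_backup(prompt):
    return f"echo: {prompt}"


alerts = []  # what monitoring would see
used, answer = complete_with_fallback(
    "hello",
    [("primary", flaky_primary), ("backup", healthy_backup)],
    on_failover=alerts.append,
)
print(used, answer, alerts)
```

The user only ever sees `answer`. The `alerts` list is the part that reaches monitoring.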

Regarding Russian models (YandexGPT, GigaChat): this is not a fallback. People come for GPT and Claude. Substituting them with local models is like replacing a BMW with a Lada and saying "well, it drives."

Do You Need a Dedicated Person?

No. You need someone responsible, but it's not a full-time job.

Setting up the system, periodically checking logs, answering questions — that's part of one person's workload, not a separate position.

Full-time will only be needed if you add: regular training for new employees, webinars with best practices, experience sharing between departments. But that's about development, not maintenance.

How to Deploy It Yourself

Don't want to pay for ready-made solutions? Deploy it yourself. Open source is no longer just for red-eyed bearded enthusiasts.

Three options:

Dify (125K stars on GitHub) — a visual builder. RAG, agents, analytics out of the box. Connect your API keys, get a corporate ChatGPT in one evening.

Open WebUI (120K stars) — couldn't be simpler. Docker, LDAP authorization, works offline. Ideal for paranoids and companies where the security guy sleeps with a firewall under his pillow.

LobeChat (70K stars) — the most beautiful interface. 42 model providers, one-click plugins. If employees are used to ChatGPT — they won't notice the difference.

What you need: a server, Docker, provider API keys. Deployment time: a day or two. Time to customize: a week to a month. Time to explain to accounting why this is needed: eternity.

Key Advice

- Calculate by API, not by subscriptions (the difference is 5–10x)

- Budget for growth: retention is 80%+, the number of active users will increase

- Better to set a separate budget for images or separate limits

- Monitor the top 20% of users — they determine the budget

- Look at cost per request, not the total sum

- Provide official access — otherwise shadow AI
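Two of the points above, watching the top 20% of users and tracking cost per request, come down to a simple aggregation over the request log. The sketch below assumes a log reduced to (user, cost in USD) pairs. The rows are made up for illustration and are not taken from the real data.

```python
from collections import defaultdict


def summarize(log_rows, top_share=0.2):
    """Aggregate (user, cost_usd) request rows into the two numbers worth watching."""
    spend = defaultdict(float)
    count = 0
    for user, cost in log_rows:
        spend[user] += cost
        count += 1
    if count == 0:
        raise ValueError("empty log")
    total = sum(spend.values())
    ranked = sorted(spend.values(), reverse=True)
    top_n = max(1, round(len(ranked) * top_share))  # size of the top group
    return {
        "cost_per_request": total / count,
        "top_users": top_n,
        "top_share_of_spend": sum(ranked[:top_n]) / total,
    }


# Made-up rows: one image-heavy user and four text-only users.
rows = (
    [("u1", 0.40)] * 10
    + [("u2", 0.01)] * 20
    + [("u3", 0.01)] * 10
    + [("u4", 0.01)] * 5
    + [("u5", 0.01)] * 5
)
stats = summarize(rows)
print(stats)
```

In this toy log one user out of five accounts for about 91% of the spend, which is the same pattern as in the real data, only exaggerated.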

58% of Users Receive No Training

This is a mistake. Here's what we did:

- 2 hours of recorded video (prompting, limitations, different models for different tasks)

- 2 weeks to watch at their convenience

- A live one-hour webinar with Q&A

The main lesson: training without testing is a recommendation, not knowledge. A person will still try to shove a million-row spreadsheet in, even if told not to. Because "what if." Same with expensive models and 4 images at once — people learn from their own mistakes, not from other people's videos.

And one more lesson — don't rush the launch. The client insisted on a week of testing before rolling out to everyone. Quote from correspondence: "Connecting a half-baked service will immediately lose user interest and reputation." Corporate users don't forgive glitches. If it didn't work once — they'll never open it again.

Methodology

- Data source: anonymized request logs to an AI service, 122,346 records

- Period: June – December 2025 (7 full months)

- Company: educational, ~500 employees (profile altered for data protection)

- Exchange rate: 78.2 rubles/$ — Central Bank of Russia as of January 8, 2026

- Average salary: 80,000 rubles/month — calculated as payroll/headcount

Data excludes January 2026 — New Year holidays, incomplete week, 1,112 requests from 47 people. Outliers would have distorted the picture.

Conclusions

AI for 500 people costs less than one annual salary. Less than one!

85% of companies don't do this and get their budgets wrong by 10%+. 95% of AI pilots don't show ROI.

That's what it looks like!